mirror of

https://github.com/wakatime/sublime-wakatime.git

synced 2023-08-10 21:13:02 +03:00

Compare commits

53 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

| 5e17ad88f6 | |||

| 24d0f65116 | |||

| a326046733 | |||

| 9bab00fd8b | |||

| b4a13a48b9 | |||

| 21601f9688 | |||

| 4c3ec87341 | |||

| b149d7fc87 | |||

| 52e6107c6e | |||

| b340637331 | |||

| 044867449a | |||

| 9e3f438823 | |||

| 887d55c3f3 | |||

| 19d54f3310 | |||

| 514a8762eb | |||

| 957c74d226 | |||

| 7b0432d6ff | |||

| 09754849be | |||

| 25ad48a97a | |||

| 3b2520afa9 | |||

| 77c2041ad3 | |||

| 8af3b53937 | |||

| 5ef2e6954e | |||

| ca94272de5 | |||

| f19a448d95 | |||

| e178765412 | |||

| 6a7de84b9c | |||

| 48810f2977 | |||

| 260eedb31d | |||

| 02e2bfcad2 | |||

| f14ece63f3 | |||

| cb7f786ec8 | |||

| ab8711d0b1 | |||

| 2354be358c | |||

| 443215bd90 | |||

| c64f125dc4 | |||

| 050b14fb53 | |||

| c7efc33463 | |||

| d0ddbed006 | |||

| 3ce8f388ab | |||

| 90731146f9 | |||

| e1ab92be6d | |||

| 8b59e46c64 | |||

| 006341eb72 | |||

| b54e0e13f6 | |||

| 835c7db864 | |||

| 53e8bb04e9 | |||

| 4aa06e3829 | |||

| 297f65733f | |||

| 5ba5e6d21b | |||

| 32eadda81f | |||

| c537044801 | |||

| a97792c23c |

155

HISTORY.rst

155

HISTORY.rst

@ -3,6 +3,161 @@ History

|

||||

-------

|

||||

|

||||

|

||||

7.0.10 (2016-09-22)

|

||||

++++++++++++++++++

|

||||

|

||||

- Handle UnicodeDecodeError when looking for python. Fixes #68.

|

||||

- Upgrade wakatime-cli to v6.0.9.

|

||||

|

||||

|

||||

7.0.9 (2016-09-02)

|

||||

++++++++++++++++++

|

||||

|

||||

- Upgrade wakatime-cli to v6.0.8.

|

||||

|

||||

|

||||

7.0.8 (2016-07-21)

|

||||

++++++++++++++++++

|

||||

|

||||

- Upgrade wakatime-cli to master version to fix debug logging encoding bug.

|

||||

|

||||

|

||||

7.0.7 (2016-07-06)

|

||||

++++++++++++++++++

|

||||

|

||||

- Upgrade wakatime-cli to v6.0.7.

|

||||

- Handle unknown exceptions from requests library by deleting cached session

|

||||

object because it could be from a previous conflicting version.

|

||||

- New hostname setting in config file to set machine hostname. Hostname

|

||||

argument takes priority over hostname from config file.

|

||||

- Prevent logging unrelated exception when logging tracebacks.

|

||||

- Use correct namespace for pygments.lexers.ClassNotFound exception so it is

|

||||

caught when dependency detection not available for a language.

|

||||

|

||||

|

||||

7.0.6 (2016-06-13)

|

||||

++++++++++++++++++

|

||||

|

||||

- Upgrade wakatime-cli to v6.0.5.

|

||||

- Upgrade pygments to v2.1.3 for better language coverage.

|

||||

|

||||

|

||||

7.0.5 (2016-06-08)

|

||||

++++++++++++++++++

|

||||

|

||||

- Upgrade wakatime-cli to master version to fix bug in urllib3 package causing

|

||||

unhandled retry exceptions.

|

||||

- Prevent tracking git branch with detached head.

|

||||

|

||||

|

||||

7.0.4 (2016-05-21)

|

||||

++++++++++++++++++

|

||||

|

||||

- Upgrade wakatime-cli to v6.0.3.

|

||||

- Upgrade requests dependency to v2.10.0.

|

||||

- Support for SOCKS proxies.

|

||||

|

||||

|

||||

7.0.3 (2016-05-16)

|

||||

++++++++++++++++++

|

||||

|

||||

- Upgrade wakatime-cli to v6.0.2.

|

||||

- Prevent popup on Mac when xcode-tools is not installed.

|

||||

|

||||

|

||||

7.0.2 (2016-04-29)

|

||||

++++++++++++++++++

|

||||

|

||||

- Prevent implicit unicode decoding from string format when logging output

|

||||

from Python version check.

|

||||

|

||||

|

||||

7.0.1 (2016-04-28)

|

||||

++++++++++++++++++

|

||||

|

||||

- Upgrade wakatime-cli to v6.0.1.

|

||||

- Fix bug which prevented plugin from being sent with extra heartbeats.

|

||||

|

||||

|

||||

7.0.0 (2016-04-28)

|

||||

++++++++++++++++++

|

||||

|

||||

- Queue heartbeats and send to wakatime-cli after 4 seconds.

|

||||

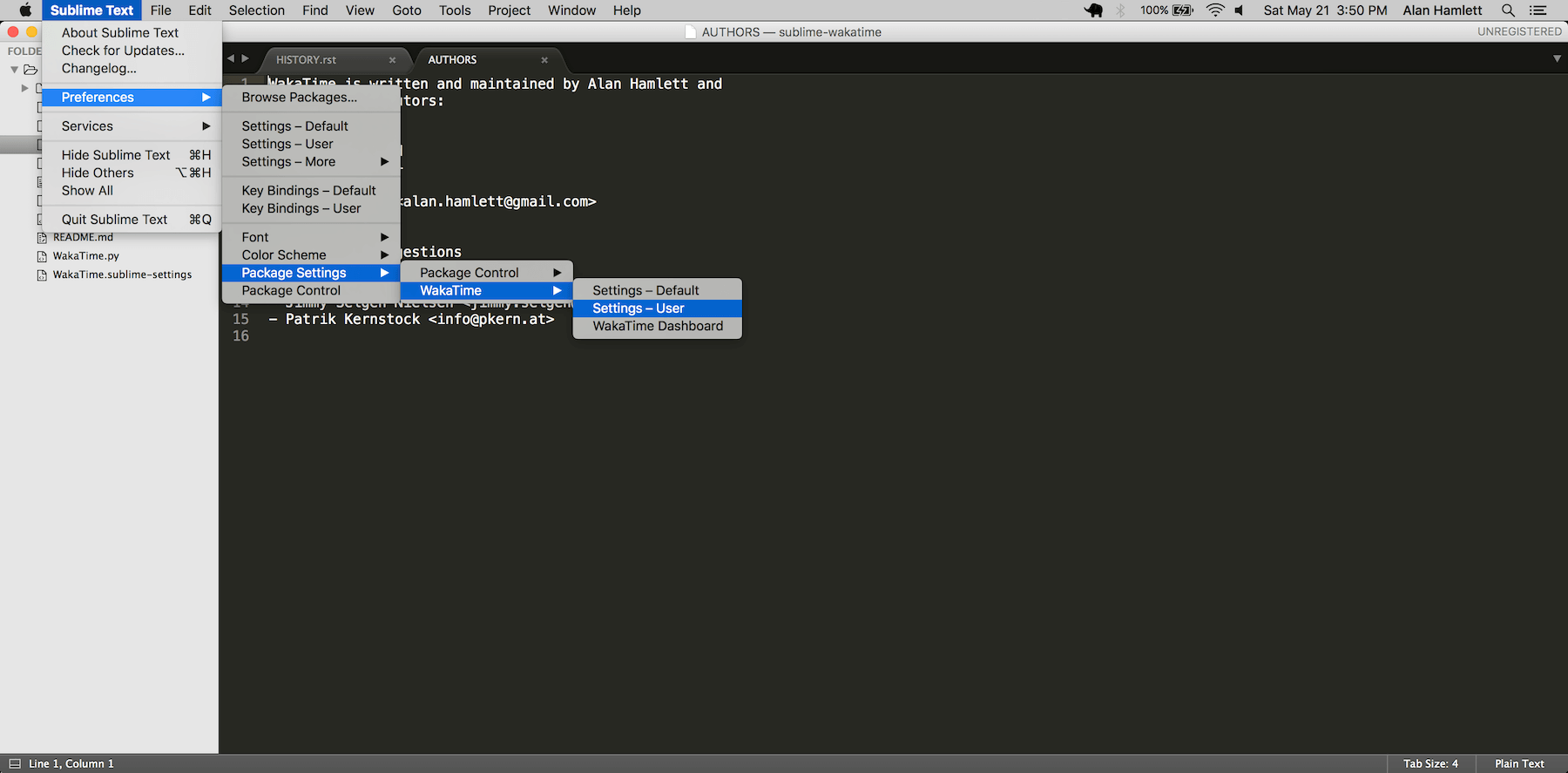

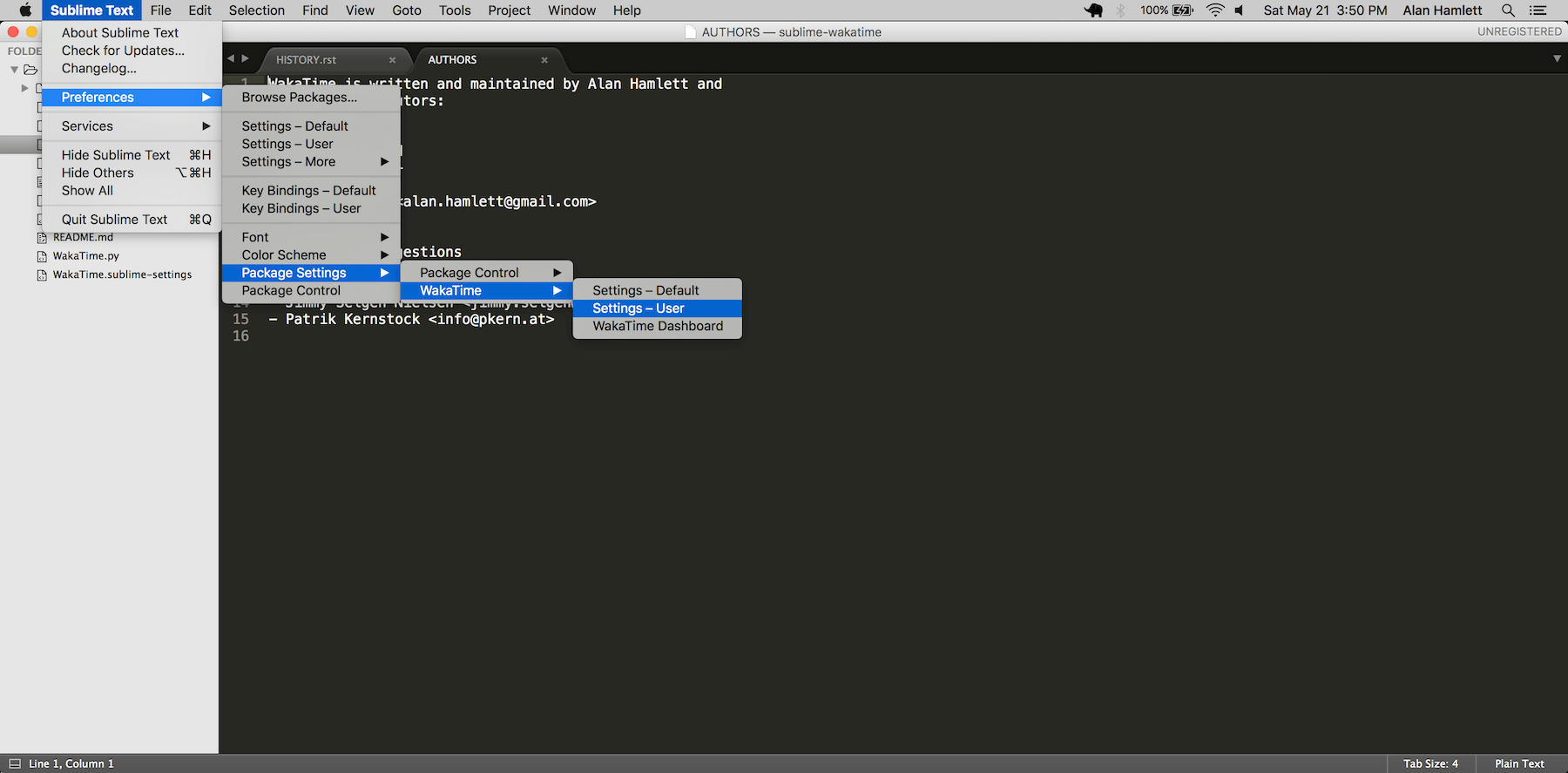

- Nest settings menu under Package Settings.

|

||||

- Upgrade wakatime-cli to v6.0.0.

|

||||

- Increase default network timeout to 60 seconds when sending heartbeats to

|

||||

the api.

|

||||

- New --extra-heartbeats command line argument for sending a JSON array of

|

||||

extra queued heartbeats to STDIN.

|

||||

- Change --entitytype command line argument to --entity-type.

|

||||

- No longer allowing --entity-type of url.

|

||||

- Support passing an alternate language to cli to be used when a language can

|

||||

not be guessed from the code file.

|

||||

|

||||

|

||||

6.0.8 (2016-04-18)

|

||||

++++++++++++++++++

|

||||

|

||||

- Upgrade wakatime-cli to v5.0.0.

|

||||

- Support regex patterns in projectmap config section for renaming projects.

|

||||

- Upgrade pytz to v2016.3.

|

||||

- Upgrade tzlocal to v1.2.2.

|

||||

|

||||

|

||||

6.0.7 (2016-03-11)

|

||||

++++++++++++++++++

|

||||

|

||||

- Fix bug causing RuntimeError when finding Python location

|

||||

|

||||

|

||||

6.0.6 (2016-03-06)

|

||||

++++++++++++++++++

|

||||

|

||||

- upgrade wakatime-cli to v4.1.13

|

||||

- encode TimeZone as utf-8 before adding to headers

|

||||

- encode X-Machine-Name as utf-8 before adding to headers

|

||||

|

||||

|

||||

6.0.5 (2016-03-06)

|

||||

++++++++++++++++++

|

||||

|

||||

- upgrade wakatime-cli to v4.1.11

|

||||

- encode machine hostname as Unicode when adding to X-Machine-Name header

|

||||

|

||||

|

||||

6.0.4 (2016-01-15)

|

||||

++++++++++++++++++

|

||||

|

||||

- fix UnicodeDecodeError on ST2 with non-English locale

|

||||

|

||||

|

||||

6.0.3 (2016-01-11)

|

||||

++++++++++++++++++

|

||||

|

||||

- upgrade wakatime-cli core to v4.1.10

|

||||

- accept 201 or 202 response codes as success from api

|

||||

- upgrade requests package to v2.9.1

|

||||

|

||||

|

||||

6.0.2 (2016-01-06)

|

||||

++++++++++++++++++

|

||||

|

||||

- upgrade wakatime-cli core to v4.1.9

|

||||

- improve C# dependency detection

|

||||

- correctly log exception tracebacks

|

||||

- log all unknown exceptions to wakatime.log file

|

||||

- disable urllib3 SSL warning from every request

|

||||

- detect dependencies from golang files

|

||||

- use api.wakatime.com for sending heartbeats

|

||||

|

||||

|

||||

6.0.1 (2016-01-01)

|

||||

++++++++++++++++++

|

||||

|

||||

- use embedded python if system python is broken, or doesn't output a version number

|

||||

- log output from wakatime-cli in ST console when in debug mode

|

||||

|

||||

|

||||

6.0.0 (2015-12-01)

|

||||

++++++++++++++++++

|

||||

|

||||

|

||||

@ -6,24 +6,37 @@

|

||||

"children":

|

||||

[

|

||||

{

|

||||

"caption": "WakaTime",

|

||||

"mnemonic": "W",

|

||||

"id": "wakatime-settings",

|

||||

"caption": "Package Settings",

|

||||

"mnemonic": "P",

|

||||

"id": "package-settings",

|

||||

"children":

|

||||

[

|

||||

{

|

||||

"command": "open_file", "args":

|

||||

{

|

||||

"file": "${packages}/WakaTime/WakaTime.sublime-settings"

|

||||

},

|

||||

"caption": "Settings – Default"

|

||||

},

|

||||

{

|

||||

"command": "open_file", "args":

|

||||

{

|

||||

"file": "${packages}/User/WakaTime.sublime-settings"

|

||||

},

|

||||

"caption": "Settings – User"

|

||||

"caption": "WakaTime",

|

||||

"mnemonic": "W",

|

||||

"id": "wakatime-settings",

|

||||

"children":

|

||||

[

|

||||

{

|

||||

"command": "open_file", "args":

|

||||

{

|

||||

"file": "${packages}/WakaTime/WakaTime.sublime-settings"

|

||||

},

|

||||

"caption": "Settings – Default"

|

||||

},

|

||||

{

|

||||

"command": "open_file", "args":

|

||||

{

|

||||

"file": "${packages}/User/WakaTime.sublime-settings"

|

||||

},

|

||||

"caption": "Settings – User"

|

||||

},

|

||||

{

|

||||

"command": "wakatime_dashboard",

|

||||

"args": {},

|

||||

"caption": "WakaTime Dashboard"

|

||||

}

|

||||

]

|

||||

}

|

||||

]

|

||||

}

|

||||

|

||||

23

README.md

23

README.md

@ -1,13 +1,12 @@

|

||||

sublime-wakatime

|

||||

================

|

||||

|

||||

Sublime Text 2 & 3 plugin to quantify your coding using https://wakatime.com/.

|

||||

Metrics, insights, and time tracking automatically generated from your programming activity.

|

||||

|

||||

|

||||

Installation

|

||||

------------

|

||||

|

||||

Heads Up! For Sublime Text 2 on Windows & Linux, WakaTime depends on [Python](http://www.python.org/getit/) being installed to work correctly.

|

||||

|

||||

1. Install [Package Control](https://packagecontrol.io/installation).

|

||||

|

||||

2. Using [Package Control](https://packagecontrol.io/docs/usage):

|

||||

@ -24,17 +23,31 @@ Heads Up! For Sublime Text 2 on Windows & Linux, WakaTime depends on [Python](ht

|

||||

|

||||

5. Visit https://wakatime.com/dashboard to see your logged time.

|

||||

|

||||

|

||||

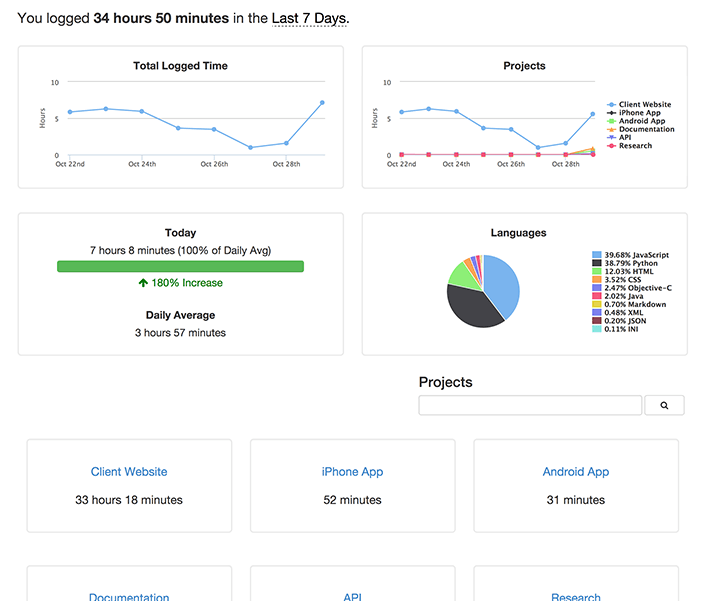

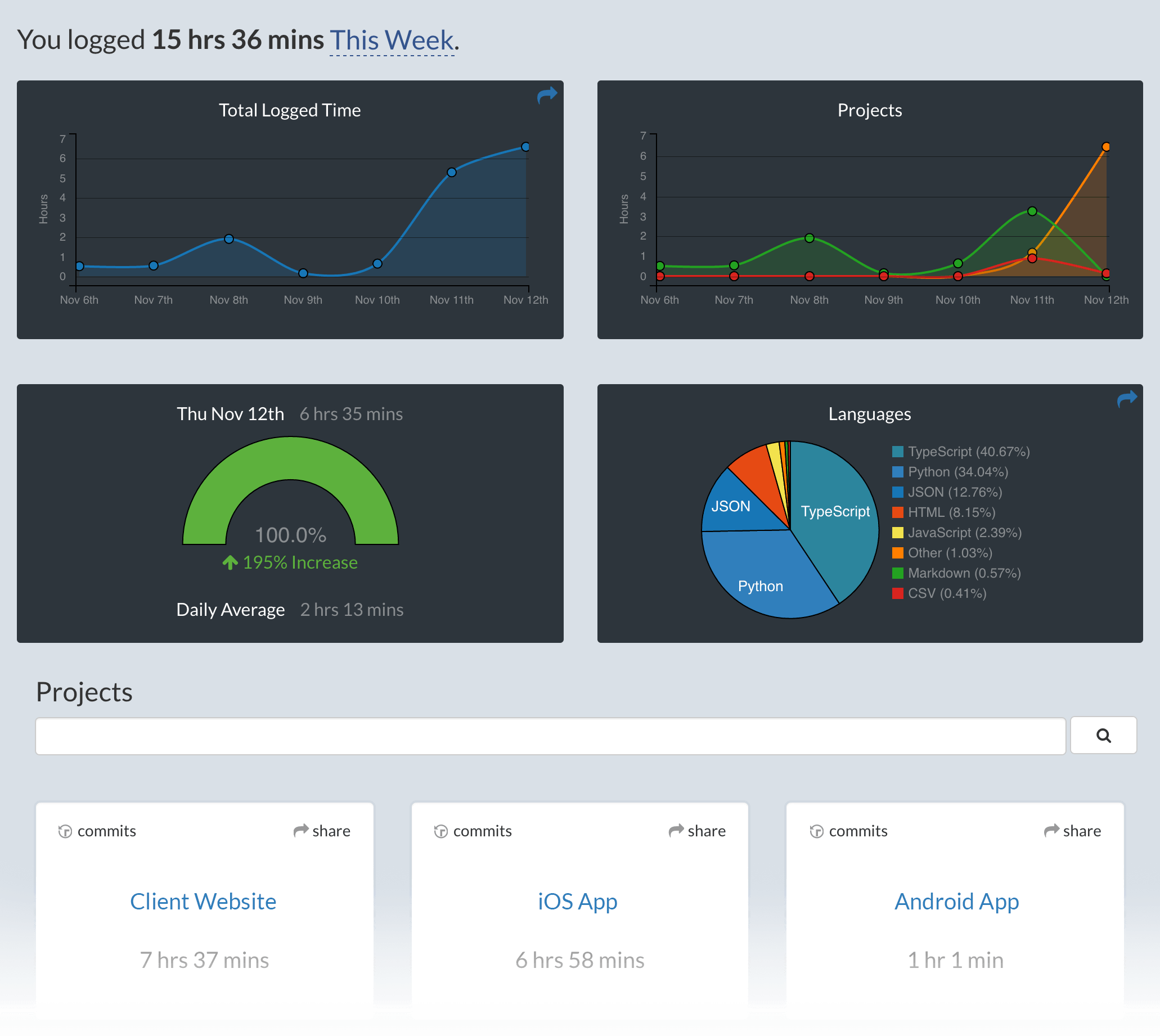

Screen Shots

|

||||

------------

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

Unresponsive Plugin Warning

|

||||

---------------------------

|

||||

|

||||

In Sublime Text 2, if you get a warning message:

|

||||

|

||||

A plugin (WakaTime) may be making Sublime Text unresponsive by taking too long (0.017332s) in its on_modified callback.

|

||||

|

||||

To fix this, go to `Preferences > Settings - User` then add the following setting:

|

||||

|

||||

`"detect_slow_plugins": false`

|

||||

|

||||

|

||||

Troubleshooting

|

||||

---------------

|

||||

|

||||

First, turn on debug mode in your `WakaTime.sublime-settings` file.

|

||||

|

||||

|

||||

|

||||

|

||||

Add the line: `"debug": true`

|

||||

|

||||

|

||||

386

WakaTime.py

386

WakaTime.py

@ -7,12 +7,13 @@ Website: https://wakatime.com/

|

||||

==========================================================="""

|

||||

|

||||

|

||||

__version__ = '6.0.0'

|

||||

__version__ = '7.0.10'

|

||||

|

||||

|

||||

import sublime

|

||||

import sublime_plugin

|

||||

|

||||

import json

|

||||

import os

|

||||

import platform

|

||||

import re

|

||||

@ -23,7 +24,7 @@ import urllib

|

||||

import webbrowser

|

||||

from datetime import datetime

|

||||

from zipfile import ZipFile

|

||||

from subprocess import Popen

|

||||

from subprocess import Popen, STDOUT, PIPE

|

||||

try:

|

||||

import _winreg as winreg # py2

|

||||

except ImportError:

|

||||

@ -31,6 +32,53 @@ except ImportError:

|

||||

import winreg # py3

|

||||

except ImportError:

|

||||

winreg = None

|

||||

try:

|

||||

import Queue as queue # py2

|

||||

except ImportError:

|

||||

import queue # py3

|

||||

|

||||

|

||||

is_py2 = (sys.version_info[0] == 2)

|

||||

is_py3 = (sys.version_info[0] == 3)

|

||||

|

||||

if is_py2:

|

||||

def u(text):

|

||||

if text is None:

|

||||

return None

|

||||

try:

|

||||

return text.decode('utf-8')

|

||||

except:

|

||||

try:

|

||||

return text.decode(sys.getdefaultencoding())

|

||||

except:

|

||||

try:

|

||||

return unicode(text)

|

||||

except:

|

||||

return text.decode('utf-8', 'replace')

|

||||

|

||||

elif is_py3:

|

||||

def u(text):

|

||||

if text is None:

|

||||

return None

|

||||

if isinstance(text, bytes):

|

||||

try:

|

||||

return text.decode('utf-8')

|

||||

except:

|

||||

try:

|

||||

return text.decode(sys.getdefaultencoding())

|

||||

except:

|

||||

pass

|

||||

try:

|

||||

return str(text)

|

||||

except:

|

||||

return text.decode('utf-8', 'replace')

|

||||

|

||||

else:

|

||||

raise Exception('Unsupported Python version: {0}.{1}.{2}'.format(

|

||||

sys.version_info[0],

|

||||

sys.version_info[1],

|

||||

sys.version_info[2],

|

||||

))

|

||||

|

||||

|

||||

# globals

|

||||

@ -45,8 +93,8 @@ LAST_HEARTBEAT = {

|

||||

'file': None,

|

||||

'is_write': False,

|

||||

}

|

||||

LOCK = threading.RLock()

|

||||

PYTHON_LOCATION = None

|

||||

HEARTBEATS = queue.Queue()

|

||||

|

||||

|

||||

# Log Levels

|

||||

@ -64,6 +112,20 @@ except ImportError:

|

||||

pass

|

||||

|

||||

|

||||

def set_timeout(callback, seconds):

|

||||

"""Runs the callback after the given seconds delay.

|

||||

|

||||

If this is Sublime Text 3, runs the callback on an alternate thread. If this

|

||||

is Sublime Text 2, runs the callback in the main thread.

|

||||

"""

|

||||

|

||||

milliseconds = int(seconds * 1000)

|

||||

try:

|

||||

sublime.set_timeout_async(callback, milliseconds)

|

||||

except AttributeError:

|

||||

sublime.set_timeout(callback, milliseconds)

|

||||

|

||||

|

||||

def log(lvl, message, *args, **kwargs):

|

||||

try:

|

||||

if lvl == DEBUG and not SETTINGS.get('debug'):

|

||||

@ -75,10 +137,34 @@ def log(lvl, message, *args, **kwargs):

|

||||

msg = message.format(**kwargs)

|

||||

print('[WakaTime] [{lvl}] {msg}'.format(lvl=lvl, msg=msg))

|

||||

except RuntimeError:

|

||||

sublime.set_timeout(lambda: log(lvl, message, *args, **kwargs), 0)

|

||||

set_timeout(lambda: log(lvl, message, *args, **kwargs), 0)

|

||||

|

||||

|

||||

def createConfigFile():

|

||||

def resources_folder():

|

||||

if platform.system() == 'Windows':

|

||||

return os.path.join(os.getenv('APPDATA'), 'WakaTime')

|

||||

else:

|

||||

return os.path.join(os.path.expanduser('~'), '.wakatime')

|

||||

|

||||

|

||||

def update_status_bar(status):

|

||||

"""Updates the status bar."""

|

||||

|

||||

try:

|

||||

if SETTINGS.get('status_bar_message'):

|

||||

msg = datetime.now().strftime(SETTINGS.get('status_bar_message_fmt'))

|

||||

if '{status}' in msg:

|

||||

msg = msg.format(status=status)

|

||||

|

||||

active_window = sublime.active_window()

|

||||

if active_window:

|

||||

for view in active_window.views():

|

||||

view.set_status('wakatime', msg)

|

||||

except RuntimeError:

|

||||

set_timeout(lambda: update_status_bar(status), 0)

|

||||

|

||||

|

||||

def create_config_file():

|

||||

"""Creates the .wakatime.cfg INI file in $HOME directory, if it does

|

||||

not already exist.

|

||||

"""

|

||||

@ -99,7 +185,7 @@ def createConfigFile():

|

||||

def prompt_api_key():

|

||||

global SETTINGS

|

||||

|

||||

createConfigFile()

|

||||

create_config_file()

|

||||

|

||||

default_key = ''

|

||||

try:

|

||||

@ -132,7 +218,7 @@ def python_binary():

|

||||

|

||||

# look for python in PATH and common install locations

|

||||

paths = [

|

||||

os.path.join(os.path.expanduser('~'), '.wakatime', 'python'),

|

||||

os.path.join(resources_folder(), 'python'),

|

||||

None,

|

||||

'/',

|

||||

'/usr/local/bin/',

|

||||

@ -160,7 +246,7 @@ def python_binary():

|

||||

def set_python_binary_location(path):

|

||||

global PYTHON_LOCATION

|

||||

PYTHON_LOCATION = path

|

||||

log(DEBUG, 'Python Binary Found: {0}'.format(path))

|

||||

log(DEBUG, 'Found Python at: {0}'.format(path))

|

||||

|

||||

|

||||

def find_python_from_registry(location, reg=None):

|

||||

@ -212,32 +298,39 @@ def find_python_from_registry(location, reg=None):

|

||||

sub_key=sub_key,

|

||||

))

|

||||

except WindowsError:

|

||||

if SETTINGS.get('debug'):

|

||||

log(DEBUG, 'Could not read registry value "{reg}\\{key}".'.format(

|

||||

reg=reg,

|

||||

key=location,

|

||||

))

|

||||

log(DEBUG, 'Could not read registry value "{reg}\\{key}".'.format(

|

||||

reg=reg,

|

||||

key=location,

|

||||

))

|

||||

|

||||

return val

|

||||

|

||||

|

||||

def find_python_in_folder(folder):

|

||||

path = 'pythonw'

|

||||

if folder is not None:

|

||||

path = os.path.realpath(os.path.join(folder, 'pythonw'))

|

||||

try:

|

||||

Popen([path, '--version'])

|

||||

return path

|

||||

except:

|

||||

pass

|

||||

def find_python_in_folder(folder, headless=True):

|

||||

pattern = re.compile(r'\d+\.\d+')

|

||||

|

||||

path = 'python'

|

||||

if folder is not None:

|

||||

path = os.path.realpath(os.path.join(folder, 'python'))

|

||||

if headless:

|

||||

path = u(path) + u('w')

|

||||

log(DEBUG, u('Looking for Python at: {0}').format(u(path)))

|

||||

try:

|

||||

Popen([path, '--version'])

|

||||

return path

|

||||

process = Popen([path, '--version'], stdout=PIPE, stderr=STDOUT)

|

||||

output, err = process.communicate()

|

||||

output = u(output).strip()

|

||||

retcode = process.poll()

|

||||

log(DEBUG, u('Python Version Output: {0}').format(output))

|

||||

if not retcode and pattern.search(output):

|

||||

return path

|

||||

except:

|

||||

pass

|

||||

log(DEBUG, u(sys.exc_info()[1]))

|

||||

|

||||

if headless:

|

||||

path = find_python_in_folder(folder, headless=False)

|

||||

if path is not None:

|

||||

return path

|

||||

|

||||

return None

|

||||

|

||||

|

||||

@ -253,10 +346,10 @@ def obfuscate_apikey(command_list):

|

||||

return cmd

|

||||

|

||||

|

||||

def enough_time_passed(now, last_heartbeat, is_write):

|

||||

if now - last_heartbeat['time'] > HEARTBEAT_FREQUENCY * 60:

|

||||

def enough_time_passed(now, is_write):

|

||||

if now - LAST_HEARTBEAT['time'] > HEARTBEAT_FREQUENCY * 60:

|

||||

return True

|

||||

if is_write and now - last_heartbeat['time'] > 2:

|

||||

if is_write and now - LAST_HEARTBEAT['time'] > 2:

|

||||

return True

|

||||

return False

|

||||

|

||||

@ -300,95 +393,176 @@ def is_view_active(view):

|

||||

return False

|

||||

|

||||

|

||||

def handle_heartbeat(view, is_write=False):

|

||||

def handle_activity(view, is_write=False):

|

||||

window = view.window()

|

||||

if window is not None:

|

||||

target_file = view.file_name()

|

||||

project = window.project_data() if hasattr(window, 'project_data') else None

|

||||

folders = window.folders()

|

||||

thread = SendHeartbeatThread(target_file, view, is_write=is_write, project=project, folders=folders)

|

||||

thread.start()

|

||||

entity = view.file_name()

|

||||

if entity:

|

||||

timestamp = time.time()

|

||||

last_file = LAST_HEARTBEAT['file']

|

||||

if entity != last_file or enough_time_passed(timestamp, is_write):

|

||||

project = window.project_data() if hasattr(window, 'project_data') else None

|

||||

folders = window.folders()

|

||||

append_heartbeat(entity, timestamp, is_write, view, project, folders)

|

||||

|

||||

|

||||

class SendHeartbeatThread(threading.Thread):

|

||||

def append_heartbeat(entity, timestamp, is_write, view, project, folders):

|

||||

global LAST_HEARTBEAT

|

||||

|

||||

# add this heartbeat to queue

|

||||

heartbeat = {

|

||||

'entity': entity,

|

||||

'timestamp': timestamp,

|

||||

'is_write': is_write,

|

||||

'cursorpos': view.sel()[0].begin() if view.sel() else None,

|

||||

'project': project,

|

||||

'folders': folders,

|

||||

}

|

||||

HEARTBEATS.put_nowait(heartbeat)

|

||||

|

||||

# make this heartbeat the LAST_HEARTBEAT

|

||||

LAST_HEARTBEAT = {

|

||||

'file': entity,

|

||||

'time': timestamp,

|

||||

'is_write': is_write,

|

||||

}

|

||||

|

||||

# process the queue of heartbeats in the future

|

||||

seconds = 4

|

||||

set_timeout(process_queue, seconds)

|

||||

|

||||

|

||||

def process_queue():

|

||||

try:

|

||||

heartbeat = HEARTBEATS.get_nowait()

|

||||

except queue.Empty:

|

||||

return

|

||||

|

||||

has_extra_heartbeats = False

|

||||

extra_heartbeats = []

|

||||

try:

|

||||

while True:

|

||||

extra_heartbeats.append(HEARTBEATS.get_nowait())

|

||||

has_extra_heartbeats = True

|

||||

except queue.Empty:

|

||||

pass

|

||||

|

||||

thread = SendHeartbeatsThread(heartbeat)

|

||||

if has_extra_heartbeats:

|

||||

thread.add_extra_heartbeats(extra_heartbeats)

|

||||

thread.start()

|

||||

|

||||

|

||||

class SendHeartbeatsThread(threading.Thread):

|

||||

"""Non-blocking thread for sending heartbeats to api.

|

||||

"""

|

||||

|

||||

def __init__(self, target_file, view, is_write=False, project=None, folders=None, force=False):

|

||||

def __init__(self, heartbeat):

|

||||

threading.Thread.__init__(self)

|

||||

self.lock = LOCK

|

||||

self.target_file = target_file

|

||||

self.is_write = is_write

|

||||

self.project = project

|

||||

self.folders = folders

|

||||

self.force = force

|

||||

|

||||

self.debug = SETTINGS.get('debug')

|

||||

self.api_key = SETTINGS.get('api_key', '')

|

||||

self.ignore = SETTINGS.get('ignore', [])

|

||||

self.last_heartbeat = LAST_HEARTBEAT.copy()

|

||||

self.cursorpos = view.sel()[0].begin() if view.sel() else None

|

||||

self.view = view

|

||||

|

||||

self.heartbeat = heartbeat

|

||||

self.has_extra_heartbeats = False

|

||||

|

||||

def add_extra_heartbeats(self, extra_heartbeats):

|

||||

self.has_extra_heartbeats = True

|

||||

self.extra_heartbeats = extra_heartbeats

|

||||

|

||||

def run(self):

|

||||

with self.lock:

|

||||

if self.target_file:

|

||||

self.timestamp = time.time()

|

||||

if self.force or self.target_file != self.last_heartbeat['file'] or enough_time_passed(self.timestamp, self.last_heartbeat, self.is_write):

|

||||

self.send_heartbeat()

|

||||

"""Running in background thread."""

|

||||

|

||||

def send_heartbeat(self):

|

||||

if not self.api_key:

|

||||

log(ERROR, 'missing api key.')

|

||||

return

|

||||

ua = 'sublime/%d sublime-wakatime/%s' % (ST_VERSION, __version__)

|

||||

cmd = [

|

||||

API_CLIENT,

|

||||

'--file', self.target_file,

|

||||

'--time', str('%f' % self.timestamp),

|

||||

'--plugin', ua,

|

||||

'--key', str(bytes.decode(self.api_key.encode('utf8'))),

|

||||

]

|

||||

if self.is_write:

|

||||

cmd.append('--write')

|

||||

if self.project and self.project.get('name'):

|

||||

cmd.extend(['--alternate-project', self.project.get('name')])

|

||||

elif self.folders:

|

||||

project_name = find_project_from_folders(self.folders, self.target_file)

|

||||

self.send_heartbeats()

|

||||

|

||||

def build_heartbeat(self, entity=None, timestamp=None, is_write=None,

|

||||

cursorpos=None, project=None, folders=None):

|

||||

"""Returns a dict for passing to wakatime-cli as arguments."""

|

||||

|

||||

heartbeat = {

|

||||

'entity': entity,

|

||||

'timestamp': timestamp,

|

||||

'is_write': is_write,

|

||||

}

|

||||

|

||||

if project and project.get('name'):

|

||||

heartbeat['alternate_project'] = project.get('name')

|

||||

elif folders:

|

||||

project_name = find_project_from_folders(folders, entity)

|

||||

if project_name:

|

||||

cmd.extend(['--alternate-project', project_name])

|

||||

if self.cursorpos is not None:

|

||||

cmd.extend(['--cursorpos', '{0}'.format(self.cursorpos)])

|

||||

for pattern in self.ignore:

|

||||

cmd.extend(['--ignore', pattern])

|

||||

if self.debug:

|

||||

cmd.append('--verbose')

|

||||

heartbeat['alternate_project'] = project_name

|

||||

|

||||

if cursorpos is not None:

|

||||

heartbeat['cursorpos'] = '{0}'.format(cursorpos)

|

||||

|

||||

return heartbeat

|

||||

|

||||

def send_heartbeats(self):

|

||||

if python_binary():

|

||||

cmd.insert(0, python_binary())

|

||||

log(DEBUG, ' '.join(obfuscate_apikey(cmd)))

|

||||

if platform.system() == 'Windows':

|

||||

Popen(cmd, shell=False)

|

||||

heartbeat = self.build_heartbeat(**self.heartbeat)

|

||||

ua = 'sublime/%d sublime-wakatime/%s' % (ST_VERSION, __version__)

|

||||

cmd = [

|

||||

python_binary(),

|

||||

API_CLIENT,

|

||||

'--entity', heartbeat['entity'],

|

||||

'--time', str('%f' % heartbeat['timestamp']),

|

||||

'--plugin', ua,

|

||||

]

|

||||

if self.api_key:

|

||||

cmd.extend(['--key', str(bytes.decode(self.api_key.encode('utf8')))])

|

||||

if heartbeat['is_write']:

|

||||

cmd.append('--write')

|

||||

if heartbeat.get('alternate_project'):

|

||||

cmd.extend(['--alternate-project', heartbeat['alternate_project']])

|

||||

if heartbeat.get('cursorpos') is not None:

|

||||

cmd.extend(['--cursorpos', heartbeat['cursorpos']])

|

||||

for pattern in self.ignore:

|

||||

cmd.extend(['--ignore', pattern])

|

||||

if self.debug:

|

||||

cmd.append('--verbose')

|

||||

if self.has_extra_heartbeats:

|

||||

cmd.append('--extra-heartbeats')

|

||||

stdin = PIPE

|

||||

extra_heartbeats = [self.build_heartbeat(**x) for x in self.extra_heartbeats]

|

||||

extra_heartbeats = json.dumps(extra_heartbeats)

|

||||

else:

|

||||

with open(os.path.join(os.path.expanduser('~'), '.wakatime.log'), 'a') as stderr:

|

||||

Popen(cmd, stderr=stderr)

|

||||

self.sent()

|

||||

extra_heartbeats = None

|

||||

stdin = None

|

||||

|

||||

log(DEBUG, ' '.join(obfuscate_apikey(cmd)))

|

||||

try:

|

||||

process = Popen(cmd, stdin=stdin, stdout=PIPE, stderr=STDOUT)

|

||||

inp = None

|

||||

if self.has_extra_heartbeats:

|

||||

inp = "{0}\n".format(extra_heartbeats)

|

||||

inp = inp.encode('utf-8')

|

||||

output, err = process.communicate(input=inp)

|

||||

output = u(output)

|

||||

retcode = process.poll()

|

||||

if (not retcode or retcode == 102) and not output:

|

||||

self.sent()

|

||||

else:

|

||||

update_status_bar('Error')

|

||||

if retcode:

|

||||

log(DEBUG if retcode == 102 else ERROR, 'wakatime-core exited with status: {0}'.format(retcode))

|

||||

if output:

|

||||

log(ERROR, u('wakatime-core output: {0}').format(output))

|

||||

except:

|

||||

log(ERROR, u(sys.exc_info()[1]))

|

||||

update_status_bar('Error')

|

||||

|

||||

else:

|

||||

log(ERROR, 'Unable to find python binary.')

|

||||

update_status_bar('Error')

|

||||

|

||||

def sent(self):

|

||||

sublime.set_timeout(self.set_status_bar, 0)

|

||||

sublime.set_timeout(self.set_last_heartbeat, 0)

|

||||

update_status_bar('OK')

|

||||

|

||||

def set_status_bar(self):

|

||||

if SETTINGS.get('status_bar_message'):

|

||||

self.view.set_status('wakatime', datetime.now().strftime(SETTINGS.get('status_bar_message_fmt')))

|

||||

|

||||

def set_last_heartbeat(self):

|

||||

global LAST_HEARTBEAT

|

||||

LAST_HEARTBEAT = {

|

||||

'file': self.target_file,

|

||||

'time': self.timestamp,

|

||||

'is_write': self.is_write,

|

||||

}

|

||||

def download_python():

|

||||

thread = DownloadPython()

|

||||

thread.start()

|

||||

|

||||

|

||||

class DownloadPython(threading.Thread):

|

||||

@ -405,10 +579,10 @@ class DownloadPython(threading.Thread):

|

||||

arch=arch,

|

||||

)

|

||||

|

||||

if not os.path.exists(os.path.join(os.path.expanduser('~'), '.wakatime')):

|

||||

os.makedirs(os.path.join(os.path.expanduser('~'), '.wakatime'))

|

||||

if not os.path.exists(resources_folder()):

|

||||

os.makedirs(resources_folder())

|

||||

|

||||

zip_file = os.path.join(os.path.expanduser('~'), '.wakatime', 'python.zip')

|

||||

zip_file = os.path.join(resources_folder(), 'python.zip')

|

||||

try:

|

||||

urllib.urlretrieve(url, zip_file)

|

||||

except AttributeError:

|

||||

@ -416,7 +590,7 @@ class DownloadPython(threading.Thread):

|

||||

|

||||

log(INFO, 'Extracting Python...')

|

||||

with ZipFile(zip_file) as zf:

|

||||

path = os.path.join(os.path.expanduser('~'), '.wakatime', 'python')

|

||||

path = os.path.join(resources_folder(), 'python')

|

||||

zf.extractall(path)

|

||||

|

||||

try:

|

||||

@ -429,15 +603,15 @@ class DownloadPython(threading.Thread):

|

||||

|

||||

def plugin_loaded():

|

||||

global SETTINGS

|

||||

log(INFO, 'Initializing WakaTime plugin v%s' % __version__)

|

||||

|

||||

SETTINGS = sublime.load_settings(SETTINGS_FILE)

|

||||

|

||||

log(INFO, 'Initializing WakaTime plugin v%s' % __version__)

|

||||

update_status_bar('Initializing')

|

||||

|

||||

if not python_binary():

|

||||

log(WARNING, 'Python binary not found.')

|

||||

if platform.system() == 'Windows':

|

||||

thread = DownloadPython()

|

||||

thread.start()

|

||||

set_timeout(download_python, 0)

|

||||

else:

|

||||

sublime.error_message("Unable to find Python binary!\nWakaTime needs Python to work correctly.\n\nGo to https://www.python.org/downloads")

|

||||

return

|

||||

@ -447,7 +621,7 @@ def plugin_loaded():

|

||||

|

||||

def after_loaded():

|

||||

if not prompt_api_key():

|

||||

sublime.set_timeout(after_loaded, 500)

|

||||

set_timeout(after_loaded, 0.5)

|

||||

|

||||

|

||||

# need to call plugin_loaded because only ST3 will auto-call it

|

||||

@ -458,15 +632,15 @@ if ST_VERSION < 3000:

|

||||

class WakatimeListener(sublime_plugin.EventListener):

|

||||

|

||||

def on_post_save(self, view):

|

||||

handle_heartbeat(view, is_write=True)

|

||||

handle_activity(view, is_write=True)

|

||||

|

||||

def on_selection_modified(self, view):

|

||||

if is_view_active(view):

|

||||

handle_heartbeat(view)

|

||||

handle_activity(view)

|

||||

|

||||

def on_modified(self, view):

|

||||

if is_view_active(view):

|

||||

handle_heartbeat(view)

|

||||

handle_activity(view)

|

||||

|

||||

|

||||

class WakatimeDashboardCommand(sublime_plugin.ApplicationCommand):

|

||||

|

||||

@ -19,5 +19,5 @@

|

||||

"status_bar_message": true,

|

||||

|

||||

// Status bar message format.

|

||||

"status_bar_message_fmt": "WakaTime active %I:%M %p"

|

||||

"status_bar_message_fmt": "WakaTime {status} %I:%M %p"

|

||||

}

|

||||

|

||||

@ -1,9 +1,9 @@

|

||||

__title__ = 'wakatime'

|

||||

__description__ = 'Common interface to the WakaTime api.'

|

||||

__url__ = 'https://github.com/wakatime/wakatime'

|

||||

__version_info__ = ('4', '1', '8')

|

||||

__version_info__ = ('6', '0', '9')

|

||||

__version__ = '.'.join(__version_info__)

|

||||

__author__ = 'Alan Hamlett'

|

||||

__author_email__ = 'alan@wakatime.com'

|

||||

__license__ = 'BSD'

|

||||

__copyright__ = 'Copyright 2014 Alan Hamlett'

|

||||

__copyright__ = 'Copyright 2016 Alan Hamlett'

|

||||

|

||||

@ -31,7 +31,7 @@ if is_py2: # pragma: nocover

|

||||

try:

|

||||

return unicode(text)

|

||||

except:

|

||||

return text

|

||||

return text.decode('utf-8', 'replace')

|

||||

open = codecs.open

|

||||

basestring = basestring

|

||||

|

||||

@ -52,7 +52,7 @@ elif is_py3: # pragma: nocover

|

||||

try:

|

||||

return str(text)

|

||||

except:

|

||||

return text

|

||||

return text.decode('utf-8', 'replace')

|

||||

open = open

|

||||

basestring = (str, bytes)

|

||||

|

||||

|

||||

40

packages/wakatime/constants.py

Normal file

40

packages/wakatime/constants.py

Normal file

@ -0,0 +1,40 @@

|

||||

# -*- coding: utf-8 -*-

|

||||

"""

|

||||

wakatime.constants

|

||||

~~~~~~~~~~~~~~~~~~

|

||||

|

||||

Constant variable definitions.

|

||||

|

||||

:copyright: (c) 2016 Alan Hamlett.

|

||||

:license: BSD, see LICENSE for more details.

|

||||

"""

|

||||

|

||||

""" Success

|

||||

Exit code used when a heartbeat was sent successfully.

|

||||

"""

|

||||

SUCCESS = 0

|

||||

|

||||

""" Api Error

|

||||

Exit code used when the WakaTime API returned an error.

|

||||

"""

|

||||

API_ERROR = 102

|

||||

|

||||

""" Config File Parse Error

|

||||

Exit code used when the ~/.wakatime.cfg config file could not be parsed.

|

||||

"""

|

||||

CONFIG_FILE_PARSE_ERROR = 103

|

||||

|

||||

""" Auth Error

|

||||

Exit code used when our api key is invalid.

|

||||

"""

|

||||

AUTH_ERROR = 104

|

||||

|

||||

""" Unknown Error

|

||||

Exit code used when there was an unhandled exception.

|

||||

"""

|

||||

UNKNOWN_ERROR = 105

|

||||

|

||||

""" Malformed Heartbeat Error

|

||||

Exit code used when the JSON input from `--extra-heartbeats` is malformed.

|

||||

"""

|

||||

MALFORMED_HEARTBEAT_ERROR = 106

|

||||

@ -12,7 +12,6 @@

|

||||

import logging

|

||||

import re

|

||||

import sys

|

||||

import traceback

|

||||

|

||||

from ..compat import u, open, import_module

|

||||

from ..exceptions import NotYetImplemented

|

||||

@ -68,7 +67,7 @@ class TokenParser(object):

|

||||

pass

|

||||

try:

|

||||

with open(self.source_file, 'r', encoding=sys.getfilesystemencoding()) as fh:

|

||||

return self.lexer.get_tokens_unprocessed(fh.read(512000))

|

||||

return self.lexer.get_tokens_unprocessed(fh.read(512000)) # pragma: nocover

|

||||

except:

|

||||

pass

|

||||

return []

|

||||

@ -120,7 +119,7 @@ class DependencyParser(object):

|

||||

except AttributeError:

|

||||

log.debug('Module {0} is missing class {1}'.format(module.__name__, class_name))

|

||||

except ImportError:

|

||||

log.debug(traceback.format_exc())

|

||||

log.traceback(logging.DEBUG)

|

||||

|

||||

def parse(self):

|

||||

if self.parser:

|

||||

|

||||

@ -12,34 +12,6 @@

|

||||

from . import TokenParser

|

||||

|

||||

|

||||

class CppParser(TokenParser):

|

||||

exclude = [

|

||||

r'^stdio\.h$',

|

||||

r'^stdlib\.h$',

|

||||

r'^string\.h$',

|

||||

r'^time\.h$',

|

||||

]

|

||||

|

||||

def parse(self):

|

||||

for index, token, content in self.tokens:

|

||||

self._process_token(token, content)

|

||||

return self.dependencies

|

||||

|

||||

def _process_token(self, token, content):

|

||||

if self.partial(token) == 'Preproc':

|

||||

self._process_preproc(token, content)

|

||||

else:

|

||||

self._process_other(token, content)

|

||||

|

||||

def _process_preproc(self, token, content):

|

||||

if content.strip().startswith('include ') or content.strip().startswith("include\t"):

|

||||

content = content.replace('include', '', 1).strip().strip('"').strip('<').strip('>').strip()

|

||||

self.append(content)

|

||||

|

||||

def _process_other(self, token, content):

|

||||

pass

|

||||

|

||||

|

||||

class CParser(TokenParser):

|

||||

exclude = [

|

||||

r'^stdio\.h$',

|

||||

@ -47,6 +19,7 @@ class CParser(TokenParser):

|

||||

r'^string\.h$',

|

||||

r'^time\.h$',

|

||||

]

|

||||

state = None

|

||||

|

||||

def parse(self):

|

||||

for index, token, content in self.tokens:

|

||||

@ -54,15 +27,25 @@ class CParser(TokenParser):

|

||||

return self.dependencies

|

||||

|

||||

def _process_token(self, token, content):

|

||||

if self.partial(token) == 'Preproc':

|

||||

if self.partial(token) == 'Preproc' or self.partial(token) == 'PreprocFile':

|

||||

self._process_preproc(token, content)

|

||||

else:

|

||||

self._process_other(token, content)

|

||||

|

||||

def _process_preproc(self, token, content):

|

||||

if content.strip().startswith('include ') or content.strip().startswith("include\t"):

|

||||

content = content.replace('include', '', 1).strip().strip('"').strip('<').strip('>').strip()

|

||||

self.append(content)

|

||||

if self.state == 'include':

|

||||

if content != '\n' and content != '#':

|

||||

content = content.strip().strip('"').strip('<').strip('>').strip()

|

||||

self.append(content, truncate=True, separator='/')

|

||||

self.state = None

|

||||

elif content.strip().startswith('include'):

|

||||

self.state = 'include'

|

||||

else:

|

||||

self.state = None

|

||||

|

||||

def _process_other(self, token, content):

|

||||

pass

|

||||

|

||||

|

||||

class CppParser(CParser):

|

||||

pass

|

||||

|

||||

77

packages/wakatime/dependencies/go.py

Normal file

77

packages/wakatime/dependencies/go.py

Normal file

@ -0,0 +1,77 @@

|

||||

# -*- coding: utf-8 -*-

|

||||

"""

|

||||

wakatime.languages.go

|

||||

~~~~~~~~~~~~~~~~~~~~~

|

||||

|

||||

Parse dependencies from Go code.

|

||||

|

||||

:copyright: (c) 2016 Alan Hamlett.

|

||||

:license: BSD, see LICENSE for more details.

|

||||

"""

|

||||

|

||||

from . import TokenParser

|

||||

|

||||

|

||||

class GoParser(TokenParser):

|

||||

state = None

|

||||

parens = 0

|

||||

aliases = 0

|

||||

exclude = [

|

||||

r'^"fmt"$',

|

||||

]

|

||||

|

||||

def parse(self):

|

||||

for index, token, content in self.tokens:

|

||||

self._process_token(token, content)

|

||||

return self.dependencies

|

||||

|

||||

def _process_token(self, token, content):

|

||||

if self.partial(token) == 'Namespace':

|

||||

self._process_namespace(token, content)

|

||||

elif self.partial(token) == 'Punctuation':

|

||||

self._process_punctuation(token, content)

|

||||

elif self.partial(token) == 'String':

|

||||

self._process_string(token, content)

|

||||

elif self.partial(token) == 'Text':

|

||||

self._process_text(token, content)

|

||||

elif self.partial(token) == 'Other':

|

||||

self._process_other(token, content)

|

||||

else:

|

||||

self._process_misc(token, content)

|

||||

|

||||

def _process_namespace(self, token, content):

|

||||

self.state = content

|

||||

self.parens = 0

|

||||

self.aliases = 0

|

||||

|

||||

def _process_string(self, token, content):

|

||||

if self.state == 'import':

|

||||

self.append(content, truncate=False)

|

||||

|

||||

def _process_punctuation(self, token, content):

|

||||

if content == '(':

|

||||

self.parens += 1

|

||||

elif content == ')':

|

||||

self.parens -= 1

|

||||

elif content == '.':

|

||||

self.aliases += 1

|

||||

else:

|

||||

self.state = None

|

||||

|

||||

def _process_text(self, token, content):

|

||||

if self.state == 'import':

|

||||

if content == "\n" and self.parens <= 0:

|

||||

self.state = None

|

||||

self.parens = 0

|

||||

self.aliases = 0

|

||||

else:

|

||||

self.state = None

|

||||

|

||||

def _process_other(self, token, content):

|

||||

if self.state == 'import':

|

||||

self.aliases += 1

|

||||

else:

|

||||

self.state = None

|

||||

|

||||

def _process_misc(self, token, content):

|

||||

self.state = None

|

||||

@ -18,7 +18,9 @@ class PythonParser(TokenParser):

|

||||

nonpackage = False

|

||||

exclude = [

|

||||

r'^os$',

|

||||

r'^sys$',

|

||||

r'^sys\.',

|

||||

r'^__future__$',

|

||||

]

|

||||

|

||||

def parse(self):

|

||||

@ -48,9 +50,7 @@ class PythonParser(TokenParser):

|

||||

self._process_import(token, content)

|

||||

|

||||

def _process_operator(self, token, content):

|

||||

if self.state is not None:

|

||||

if content == '.':

|

||||

self.nonpackage = True

|

||||

pass

|

||||

|

||||

def _process_punctuation(self, token, content):

|

||||

if content == '(':

|

||||

@ -73,8 +73,6 @@ class PythonParser(TokenParser):

|

||||

if self.state == 'from':

|

||||

self.append(content, truncate=True, truncate_to=1)

|

||||

self.state = 'from-2'

|

||||

elif self.state == 'from-2' and content != 'import':

|

||||

self.append(content, truncate=True, truncate_to=1)

|

||||

elif self.state == 'import':

|

||||

self.append(content, truncate=True, truncate_to=1)

|

||||

self.state = 'import-2'

|

||||

|

||||

@ -69,30 +69,9 @@ KEYWORDS = [

|

||||

]

|

||||

|

||||

|

||||

class LassoJavascriptParser(TokenParser):

|

||||

|

||||

def parse(self):

|

||||

for index, token, content in self.tokens:

|

||||

self._process_token(token, content)

|

||||

return self.dependencies

|

||||

|

||||

def _process_token(self, token, content):

|

||||

if u(token) == 'Token.Name.Other':

|

||||

self._process_name(token, content)

|

||||

elif u(token) == 'Token.Literal.String.Single' or u(token) == 'Token.Literal.String.Double':

|

||||

self._process_literal_string(token, content)

|

||||

|

||||

def _process_name(self, token, content):

|

||||

if content.lower() in KEYWORDS:

|

||||

self.append(content.lower())

|

||||

|

||||

def _process_literal_string(self, token, content):

|

||||

if 'famous/core/' in content.strip('"').strip("'"):

|

||||

self.append('famous')

|

||||

|

||||

|

||||

class HtmlDjangoParser(TokenParser):

|

||||

tags = []

|

||||

opening_tag = False

|

||||

getting_attrs = False

|

||||

current_attr = None

|

||||

current_attr_value = None

|

||||

@ -103,7 +82,9 @@ class HtmlDjangoParser(TokenParser):

|

||||

return self.dependencies

|

||||

|

||||

def _process_token(self, token, content):

|

||||

if u(token) == 'Token.Name.Tag':

|

||||

if u(token) == 'Token.Punctuation':

|

||||

self._process_punctuation(token, content)

|

||||

elif u(token) == 'Token.Name.Tag':

|

||||

self._process_tag(token, content)

|

||||

elif u(token) == 'Token.Literal.String':

|

||||

self._process_string(token, content)

|

||||

@ -114,26 +95,30 @@ class HtmlDjangoParser(TokenParser):

|

||||

def current_tag(self):

|

||||

return None if len(self.tags) == 0 else self.tags[0]

|

||||

|

||||

def _process_tag(self, token, content):

|

||||

def _process_punctuation(self, token, content):

|

||||

if content.startswith('</') or content.startswith('/'):

|

||||

try:

|

||||

self.tags.pop(0)

|

||||

except IndexError:

|

||||

# ignore errors from malformed markup

|

||||

pass

|

||||

self.opening_tag = False

|

||||

self.getting_attrs = False

|

||||

elif content.startswith('<'):

|

||||

self.opening_tag = True

|

||||

elif content.startswith('>'):

|

||||

self.opening_tag = False

|

||||

self.getting_attrs = False

|

||||

|

||||

def _process_tag(self, token, content):

|

||||

if self.opening_tag:

|

||||

self.tags.insert(0, content.replace('<', '', 1).strip().lower())

|

||||

self.getting_attrs = True

|

||||

elif content.startswith('>'):

|

||||

self.getting_attrs = False

|

||||

self.current_attr = None

|

||||

|

||||

def _process_attribute(self, token, content):

|

||||

if self.getting_attrs:

|

||||

self.current_attr = content.lower().strip('=')

|

||||

else:

|

||||

self.current_attr = None

|

||||

self.current_attr_value = None

|

||||

|

||||

def _process_string(self, token, content):

|

||||

@ -156,8 +141,6 @@ class HtmlDjangoParser(TokenParser):

|

||||

elif content.startswith('"') or content.startswith("'"):

|

||||

if self.current_attr_value is None:

|

||||

self.current_attr_value = content

|

||||

else:

|

||||

self.current_attr_value += content

|

||||

|

||||

|

||||

class VelocityHtmlParser(HtmlDjangoParser):

|

||||

|

||||

80

packages/wakatime/languages/default.json

Normal file

80

packages/wakatime/languages/default.json

Normal file

@ -0,0 +1,80 @@

|

||||

{

|

||||

"ActionScript": "ActionScript",

|

||||

"ApacheConf": "ApacheConf",

|

||||

"AppleScript": "AppleScript",

|

||||

"ASP": "ASP",

|

||||

"Assembly": "Assembly",

|

||||

"Awk": "Awk",

|

||||

"Bash": "Bash",

|

||||

"Basic": "Basic",

|

||||

"BrightScript": "BrightScript",

|

||||

"C": "C",

|

||||

"C#": "C#",

|

||||

"C++": "C++",

|

||||

"Clojure": "Clojure",

|

||||

"Cocoa": "Cocoa",

|

||||

"CoffeeScript": "CoffeeScript",

|

||||

"ColdFusion": "ColdFusion",

|

||||

"Common Lisp": "Common Lisp",

|

||||

"CSHTML": "CSHTML",

|

||||

"CSS": "CSS",

|

||||

"Dart": "Dart",

|

||||

"Delphi": "Delphi",

|

||||

"Elixir": "Elixir",

|

||||

"Elm": "Elm",

|

||||

"Emacs Lisp": "Emacs Lisp",

|

||||

"Erlang": "Erlang",

|

||||

"F#": "F#",

|

||||

"Fortran": "Fortran",

|

||||

"Go": "Go",

|

||||

"Gous": "Gosu",

|

||||

"Groovy": "Groovy",

|

||||

"Haml": "Haml",

|

||||

"HaXe": "HaXe",

|

||||

"Haskell": "Haskell",

|

||||

"HTML": "HTML",

|

||||

"INI": "INI",

|

||||

"Jade": "Jade",

|

||||

"Java": "Java",

|

||||

"JavaScript": "JavaScript",

|

||||

"JSON": "JSON",

|

||||

"JSX": "JSX",

|

||||

"Kotlin": "Kotlin",

|

||||

"LESS": "LESS",

|

||||

"Lua": "Lua",

|

||||

"Markdown": "Markdown",

|

||||

"Matlab": "Matlab",

|

||||

"Mustache": "Mustache",

|

||||

"OCaml": "OCaml",

|

||||

"Objective-C": "Objective-C",

|

||||

"Objective-C++": "Objective-C++",

|

||||

"Objective-J": "Objective-J",

|

||||

"Perl": "Perl",

|

||||

"PHP": "PHP",

|

||||

"PowerShell": "PowerShell",

|

||||

"Prolog": "Prolog",

|

||||

"Puppet": "Puppet",

|

||||

"Python": "Python",

|

||||

"R": "R",

|

||||

"reStructuredText": "reStructuredText",

|

||||

"Ruby": "Ruby",

|

||||

"Rust": "Rust",

|

||||

"Sass": "Sass",

|

||||

"Scala": "Scala",

|

||||

"Scheme": "Scheme",

|

||||

"SCSS": "SCSS",

|

||||

"Shell": "Shell",

|

||||

"Slim": "Slim",

|

||||

"Smalltalk": "Smalltalk",

|

||||

"SQL": "SQL",

|

||||

"Swift": "Swift",

|

||||

"Text": "Text",

|

||||

"Turing": "Turing",

|

||||

"Twig": "Twig",

|

||||

"TypeScript": "TypeScript",

|

||||

"VB.net": "VB.net",

|

||||

"VimL": "VimL",

|

||||

"XAML": "XAML",

|

||||

"XML": "XML",

|

||||

"YAML": "YAML"

|

||||

}

|

||||

531

packages/wakatime/languages/vim.json

Normal file

531

packages/wakatime/languages/vim.json

Normal file

@ -0,0 +1,531 @@

|

||||

{

|

||||

"a2ps": null,

|

||||

"a65": "Assembly",

|

||||

"aap": null,

|

||||

"abap": null,

|

||||

"abaqus": null,

|

||||

"abc": null,

|

||||

"abel": null,

|

||||

"acedb": null,

|

||||

"ada": null,

|

||||

"aflex": null,

|

||||

"ahdl": null,

|

||||

"alsaconf": null,

|

||||

"amiga": null,

|

||||

"aml": null,

|

||||

"ampl": null,

|

||||

"ant": null,

|

||||

"antlr": null,

|

||||

"apache": null,

|

||||

"apachestyle": null,

|

||||

"arch": null,

|

||||

"art": null,

|

||||

"asm": "Assembly",

|

||||

"asm68k": "Assembly",

|

||||

"asmh8300": "Assembly",

|

||||

"asn": null,

|

||||

"aspperl": null,

|

||||

"aspvbs": null,

|

||||

"asterisk": null,

|

||||

"asteriskvm": null,

|

||||

"atlas": null,

|

||||

"autohotkey": null,

|

||||

"autoit": null,

|

||||

"automake": null,

|

||||

"ave": null,

|

||||

"awk": null,

|

||||

"ayacc": null,

|

||||

"b": null,

|

||||

"baan": null,

|

||||

"basic": "Basic",

|

||||

"bc": null,

|

||||

"bdf": null,

|

||||

"bib": null,

|

||||

"bindzone": null,

|

||||

"blank": null,

|

||||

"bst": null,

|

||||

"btm": null,

|

||||

"bzr": null,

|

||||

"c": "C",

|

||||

"cabal": null,

|

||||

"calendar": null,

|

||||

"catalog": null,

|

||||

"cdl": null,

|

||||

"cdrdaoconf": null,

|

||||

"cdrtoc": null,

|

||||

"cf": null,

|

||||

"cfg": null,

|

||||

"ch": null,

|

||||

"chaiscript": null,

|

||||

"change": null,

|

||||

"changelog": null,

|

||||

"chaskell": null,

|

||||

"cheetah": null,

|

||||

"chill": null,

|

||||

"chordpro": null,

|

||||

"cl": null,

|

||||

"clean": null,

|

||||

"clipper": null,

|

||||

"cmake": null,

|

||||

"cmusrc": null,

|

||||

"cobol": null,

|

||||

"coco": null,

|

||||

"conaryrecipe": null,

|

||||

"conf": null,

|

||||

"config": null,

|

||||

"context": null,

|

||||

"cpp": "C++",

|

||||

"crm": null,

|

||||

"crontab": "Crontab",

|

||||

"cs": "C#",

|

||||

"csc": null,

|

||||

"csh": null,

|

||||

"csp": null,

|

||||

"css": null,

|

||||

"cterm": null,

|

||||

"ctrlh": null,

|

||||

"cucumber": null,

|

||||

"cuda": null,

|

||||

"cupl": null,

|

||||

"cuplsim": null,

|

||||

"cvs": null,

|

||||

"cvsrc": null,

|

||||

"cweb": null,

|

||||

"cynlib": null,

|

||||

"cynpp": null,

|

||||

"d": null,

|

||||

"datascript": null,

|

||||

"dcd": null,

|

||||

"dcl": null,

|

||||

"debchangelog": null,

|

||||

"debcontrol": null,

|

||||

"debsources": null,

|

||||

"def": null,

|

||||

"denyhosts": null,

|

||||

"desc": null,

|

||||

"desktop": null,

|

||||

"dictconf": null,

|

||||

"dictdconf": null,

|

||||

"diff": null,

|

||||

"dircolors": null,

|

||||

"diva": null,

|

||||

"django": null,

|

||||

"dns": null,

|

||||

"docbk": null,

|

||||

"docbksgml": null,

|

||||

"docbkxml": null,

|

||||

"dosbatch": null,

|

||||

"dosini": null,

|

||||

"dot": null,

|

||||

"doxygen": null,

|

||||

"dracula": null,

|

||||

"dsl": null,

|

||||

"dtd": null,

|

||||

"dtml": null,

|

||||

"dtrace": null,

|

||||

"dylan": null,

|

||||

"dylanintr": null,

|

||||

"dylanlid": null,

|

||||

"ecd": null,

|

||||

"edif": null,

|

||||

"eiffel": null,

|

||||

"elf": null,

|

||||

"elinks": null,

|

||||

"elmfilt": null,

|

||||

"erlang": null,

|

||||

"eruby": null,

|

||||

"esmtprc": null,

|

||||

"esqlc": null,

|

||||

"esterel": null,

|

||||

"eterm": null,

|

||||

"eviews": null,

|

||||

"exim": null,

|

||||

"expect": null,

|

||||

"exports": null,

|

||||

"fan": null,

|

||||

"fasm": null,

|

||||

"fdcc": null,

|

||||

"fetchmail": null,

|

||||

"fgl": null,

|

||||

"flexwiki": null,

|

||||

"focexec": null,

|

||||

"form": null,

|

||||

"forth": null,

|

||||

"fortran": null,

|

||||

"foxpro": null,

|

||||

"framescript": null,

|

||||

"freebasic": null,

|

||||

"fstab": null,

|

||||

"fvwm": null,

|

||||

"fvwm2m4": null,

|

||||

"gdb": null,

|

||||

"gdmo": null,

|

||||

"gedcom": null,

|

||||

"git": null,

|

||||

"gitcommit": null,

|

||||

"gitconfig": null,

|

||||

"gitrebase": null,

|

||||

"gitsendemail": null,

|

||||

"gkrellmrc": null,

|

||||

"gnuplot": null,

|

||||

"gp": null,

|

||||

"gpg": null,

|

||||

"grads": null,

|

||||

"gretl": null,

|

||||

"groff": null,

|

||||

"groovy": null,

|

||||

"group": null,

|

||||

"grub": null,

|

||||

"gsp": null,

|

||||

"gtkrc": null,

|

||||

"haml": "Haml",

|

||||

"hamster": null,

|

||||

"haskell": "Haskell",

|

||||

"haste": null,

|

||||

"hastepreproc": null,

|

||||

"hb": null,

|

||||

"help": null,

|

||||

"hercules": null,

|

||||

"hex": null,

|

||||

"hog": null,

|

||||

"hostconf": null,

|

||||

"html": "HTML",

|

||||

"htmlcheetah": "HTML",

|

||||

"htmldjango": "HTML",

|

||||

"htmlm4": "HTML",

|

||||

"htmlos": null,

|

||||

"ia64": null,

|

||||

"ibasic": null,

|

||||

"icemenu": null,

|

||||

"icon": null,

|

||||

"idl": null,

|

||||

"idlang": null,

|

||||

"indent": null,

|

||||

"inform": null,

|

||||

"initex": null,

|

||||

"initng": null,

|

||||

"inittab": null,

|

||||

"ipfilter": null,

|

||||

"ishd": null,

|

||||

"iss": null,

|

||||

"ist": null,

|

||||

"jal": null,

|

||||

"jam": null,

|

||||

"jargon": null,

|

||||

"java": "Java",

|

||||

"javacc": null,

|

||||

"javascript": "JavaScript",

|

||||

"jess": null,

|

||||

"jgraph": null,

|

||||

"jproperties": null,

|

||||

"jsp": null,

|

||||

"kconfig": null,

|

||||

"kix": null,

|

||||

"kscript": null,

|

||||

"kwt": null,

|

||||

"lace": null,

|

||||

"latte": null,

|

||||

"ld": null,

|

||||

"ldapconf": null,

|

||||

"ldif": null,

|

||||

"lex": null,

|

||||

"lftp": null,

|

||||

"lhaskell": "Haskell",

|

||||

"libao": null,

|

||||

"lifelines": null,

|

||||

"lilo": null,

|

||||

"limits": null,

|

||||

"liquid": null,

|

||||

"lisp": null,

|

||||

"lite": null,

|

||||

"litestep": null,

|

||||

"loginaccess": null,

|

||||

"logindefs": null,

|

||||

"logtalk": null,

|

||||

"lotos": null,

|

||||

"lout": null,

|

||||

"lpc": null,

|

||||

"lprolog": null,

|

||||

"lscript": null,

|

||||

"lsl": null,

|

||||

"lss": null,

|

||||

"lua": null,

|

||||

"lynx": null,

|

||||

"m4": null,

|

||||

"mail": null,

|

||||

"mailaliases": null,

|

||||

"mailcap": null,

|

||||

"make": null,

|

||||

"man": null,

|

||||

"manconf": null,

|

||||

"manual": null,

|

||||

"maple": null,

|

||||

"markdown": "Markdown",

|

||||

"masm": null,

|

||||

"mason": null,

|

||||

"master": null,

|

||||

"matlab": null,

|

||||

"maxima": null,

|

||||

"mel": null,

|

||||

"messages": null,

|

||||

"mf": null,

|

||||

"mgl": null,

|

||||

"mgp": null,

|

||||

"mib": null,

|

||||

"mma": null,

|

||||

"mmix": null,

|

||||

"mmp": null,

|

||||

"modconf": null,

|

||||

"model": null,

|

||||

"modsim3": null,

|

||||

"modula2": null,

|

||||

"modula3": null,

|

||||

"monk": null,

|

||||

"moo": null,

|

||||

"mp": null,

|

||||

"mplayerconf": null,

|

||||

"mrxvtrc": null,

|

||||

"msidl": null,

|

||||

"msmessages": null,

|

||||

"msql": null,

|

||||

"mupad": null,

|

||||

"mush": null,

|

||||

"muttrc": null,

|

||||

"mysql": null,

|

||||

"named": null,

|

||||

"nanorc": null,

|

||||

"nasm": null,

|

||||

"nastran": null,

|

||||

"natural": null,

|

||||

"ncf": null,

|

||||

"netrc": null,

|

||||

"netrw": null,

|

||||

"nosyntax": null,

|

||||

"nqc": null,

|

||||

"nroff": null,

|

||||

"nsis": null,

|

||||

"obj": null,

|

||||

"objc": "Objective-C",

|

||||