mirror of

https://github.com/wakatime/sublime-wakatime.git

synced 2023-08-10 21:13:02 +03:00

Compare commits

239 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

| 9777bc7788 | |||

| 20b78defa6 | |||

| 8cb1c557d9 | |||

| 20a1965f13 | |||

| 0b802a554e | |||

| 30186c9b2c | |||

| 311a0b5309 | |||

| b7602d89fb | |||

| 305de46e32 | |||

| c574234927 | |||

| a69c50f470 | |||

| f4b40089f3 | |||

| 08394357b7 | |||

| 205d4eb163 | |||

| c4c27e4e9e | |||

| 9167eb2558 | |||

| eaa3bb5180 | |||

| 7755971d11 | |||

| 7634be5446 | |||

| 5e17ad88f6 | |||

| 24d0f65116 | |||

| a326046733 | |||

| 9bab00fd8b | |||

| b4a13a48b9 | |||

| 21601f9688 | |||

| 4c3ec87341 | |||

| b149d7fc87 | |||

| 52e6107c6e | |||

| b340637331 | |||

| 044867449a | |||

| 9e3f438823 | |||

| 887d55c3f3 | |||

| 19d54f3310 | |||

| 514a8762eb | |||

| 957c74d226 | |||

| 7b0432d6ff | |||

| 09754849be | |||

| 25ad48a97a | |||

| 3b2520afa9 | |||

| 77c2041ad3 | |||

| 8af3b53937 | |||

| 5ef2e6954e | |||

| ca94272de5 | |||

| f19a448d95 | |||

| e178765412 | |||

| 6a7de84b9c | |||

| 48810f2977 | |||

| 260eedb31d | |||

| 02e2bfcad2 | |||

| f14ece63f3 | |||

| cb7f786ec8 | |||

| ab8711d0b1 | |||

| 2354be358c | |||

| 443215bd90 | |||

| c64f125dc4 | |||

| 050b14fb53 | |||

| c7efc33463 | |||

| d0ddbed006 | |||

| 3ce8f388ab | |||

| 90731146f9 | |||

| e1ab92be6d | |||

| 8b59e46c64 | |||

| 006341eb72 | |||

| b54e0e13f6 | |||

| 835c7db864 | |||

| 53e8bb04e9 | |||

| 4aa06e3829 | |||

| 297f65733f | |||

| 5ba5e6d21b | |||

| 32eadda81f | |||

| c537044801 | |||

| a97792c23c | |||

| 4223f3575f | |||

| 284cdf3ce4 | |||

| 27afc41bf4 | |||

| 1fdda0d64a | |||

| c90a4863e9 | |||

| 94343e5b07 | |||

| 03acea6e25 | |||

| 77594700bd | |||

| 6681409e98 | |||

| 8f7837269a | |||

| a523b3aa4d | |||

| 6985ce32bb | |||

| 4be40c7720 | |||

| eeb7fd8219 | |||

| 11fbd2d2a6 | |||

| 3cecd0de5d | |||

| c50100e675 | |||

| c1da94bc18 | |||

| 7f9d6ede9d | |||

| 192a5c7aa7 | |||

| 16bbe21be9 | |||

| 5ebaf12a99 | |||

| 1834e8978a | |||

| 22c8ed74bd | |||

| 12bbb4e561 | |||

| c71cb21cc1 | |||

| eb11b991f0 | |||

| 7ea51d09ba | |||

| b07b59e0c8 | |||

| 9d715e95b7 | |||

| 3edaed53aa | |||

| 865b0bcee9 | |||

| d440fe912c | |||

| 627455167f | |||

| aba89d3948 | |||

| 18d87118e1 | |||

| fd91b9e032 | |||

| 16b15773bf | |||

| f0b518862a | |||

| 7ee7de70d5 | |||

| fb479f8e84 | |||

| 7d37193f65 | |||

| 6bd62b95db | |||

| abf4a94a59 | |||

| 9337e3173b | |||

| 57fa4d4d84 | |||

| 9b5c59e677 | |||

| 71ce25a326 | |||

| f2f14207f5 | |||

| ac2ec0e73c | |||

| 040a76b93c | |||

| dab0621b97 | |||

| 675f9ecd69 | |||

| a6f92b9c74 | |||

| bfcc242d7e | |||

| 762027644f | |||

| 3c4ceb95fa | |||

| d6d8bceca0 | |||

| acaad2dc83 | |||

| 23c5801080 | |||

| 05a3bfbb53 | |||

| 8faaa3b0e3 | |||

| 4bcddf2a98 | |||

| b51ae5c2c4 | |||

| 5cd0061653 | |||

| 651c84325e | |||

| 89368529cb | |||

| f1f408284b | |||

| 7053932731 | |||

| b6c4956521 | |||

| 68a2557884 | |||

| c7ee7258fb | |||

| aaff2503fb | |||

| 00a1193bd3 | |||

| 2371daac1b | |||

| 4395db2b2d | |||

| fc8c61fa3f | |||

| aa30110343 | |||

| b671856341 | |||

| b801759cdf | |||

| 919064200b | |||

| 911b5656d7 | |||

| 48bbab33b4 | |||

| 3b2aafe004 | |||

| aa0b2d6d70 | |||

| 1a6f588d94 | |||

| 373ebf933f | |||

| 7fb47228f9 | |||

| 4fca5e1c06 | |||

| cb2d126c47 | |||

| 17404bf848 | |||

| 510eea0a8b | |||

| d16d1ca747 | |||

| 440e33b8b7 | |||

| 307029c37a | |||

| 60c8ea4454 | |||

| e4fe604a93 | |||

| 308187b2ed | |||

| 97f4077675 | |||

| 4960289ed1 | |||

| 82530cef4f | |||

| 08172098e2 | |||

| 56f54fb064 | |||

| 1bea7cde8c | |||

| 038847e665 | |||

| d233494a39 | |||

| 070ad5a023 | |||

| 757a4c6905 | |||

| dd61a4f5f4 | |||

| 69f9bbdc78 | |||

| e1dc4039fd | |||

| 7c07925527 | |||

| ee8c0dfed8 | |||

| ad4df93b04 | |||

| 9a600df969 | |||

| a0abeac3e2 | |||

| 12b8c36c5f | |||

| 7d4d50ee62 | |||

| 520db283cb | |||

| f3179b75d9 | |||

| 1bc8b9b9c7 | |||

| 584d109357 | |||

| 327c0e448b | |||

| 3182a45bbd | |||

| 4cd4a26f91 | |||

| 85856f2c53 | |||

| 8a09559364 | |||

| 5e2e1be779 | |||

| b1d344cb46 | |||

| 7245cbeb58 | |||

| 21395579ea | |||

| 08b64b4ff6 | |||

| 20571ec085 | |||

| e43dcc1c83 | |||

| 4610ff3e0c | |||

| c86d6254e0 | |||

| df331db5cc | |||

| baff0f415d | |||

| 499dc167a5 | |||

| 83f4a29a15 | |||

| 8f02adacf9 | |||

| e631d33944 | |||

| cbd92a69b3 | |||

| b7c047102d | |||

| d0bfd04602 | |||

| 101ab38c70 | |||

| 8632c4ff08 | |||

| 80556d0cbf | |||

| 253728545c | |||

| 49d9b1d7dc | |||

| 8574abe012 | |||

| 6b6f60d8e8 | |||

| 986e592d1e | |||

| 6ec3b171e1 | |||

| bcfb9862af | |||

| 85cf9f4eb5 | |||

| d2a996e845 | |||

| c863bde54a | |||

| e19f85f081 | |||

| 7b854d4041 | |||

| e122f73e6b | |||

| 474942eb6a | |||

| a5f031b046 | |||

| 66fddc07b9 | |||

| e56a07e909 | |||

| 64ea40b3f5 | |||

| 17fd6ef8e1 |

@@ -11,5 +11,5 @@ Development Lead

Patches and Suggestions
-----------------------

- 3onyc <3onyc@x3tech.com>
- userid <xixico@ymail.com>
- Jimmy Selgen Nielsen <jimmy.selgen@gmail.com>
- Patrik Kernstock <info@pkern.at>

6

Default.sublime-commands

Normal file


@@ -0,0 +1,6 @@

[
    {
        "caption": "WakaTime: Open Dashboard",
        "command": "wakatime_dashboard"
    }
]

646

HISTORY.rst


@@ -3,6 +3,652 @@ History

-------


7.0.16 (2017-02-20)
++++++++++++++++++

- Upgrade wakatime-cli to v7.0.2.


7.0.15 (2017-02-13)
++++++++++++++++++

- Upgrade wakatime-cli to v6.2.2.
- Upgrade pygments library to v2.2.0 for improved language detection.


7.0.14 (2017-02-08)
++++++++++++++++++

- Upgrade wakatime-cli to v6.2.1.
- Allow boolean or list of regex patterns for hidefilenames config setting.

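The 7.0.14 entry above says `hidefilenames` may be either a boolean or a list of regex patterns. A minimal sketch of how such a setting could be evaluated follows. The function name `should_hide` and the case-insensitive matching are assumptions made for illustration and are not taken from wakatime-cli.

```python
import re


def should_hide(path, hidefilenames):
    """Return True when `path` should be hidden (hypothetical helper).

    `hidefilenames` is either a boolean or a list of regex pattern strings.
    """
    if isinstance(hidefilenames, bool):
        # A plain boolean hides every file name or none of them.
        return hidefilenames
    for pattern in hidefilenames or []:
        try:
            if re.search(pattern, path, re.IGNORECASE):
                return True
        except re.error:
            # Assumption: an invalid pattern is skipped rather than raised.
            continue
    return False
```

With this shape, `True` hides all file names, while a list hides only the paths that match at least one pattern.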

7.0.13 (2016-11-11)
++++++++++++++++++

- Support old Sublime Text with Python 2.6.
- Fix bug that prevented reading default api key from existing config file.


7.0.12 (2016-10-24)
++++++++++++++++++

- Upgrade wakatime-cli to v6.2.0.
- Exit with status code 104 when api key is missing or invalid. Exit with
  status code 103 when config file missing or invalid.
- New WAKATIME_HOME env variable for setting path to config and log files.
- Improve debug warning message from unsupported dependency parsers.

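The 7.0.12 entry above names two exit status codes. A caller that launches the cli could map them to readable messages roughly as sketched here. Only the values 103 and 104 come from the changelog; the constant names, the function name, and the wording of the messages are assumptions.

```python
# Status codes named in the 7.0.12 changelog entry.
CONFIG_FILE_ERROR = 103  # config file missing or invalid
API_KEY_ERROR = 104      # api key missing or invalid


def describe_exit_code(code):
    """Translate a process exit status into a short message (hypothetical)."""
    if code == 0:
        return 'success'
    if code == CONFIG_FILE_ERROR:
        return 'config file missing or invalid'
    if code == API_KEY_ERROR:
        return 'api key missing or invalid'
    return 'unknown error (%d)' % code
```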

7.0.11 (2016-09-23)

|

||||

++++++++++++++++++

|

||||

|

||||

- Handle UnicodeDecodeError when when logging. Related to #68.

|

||||

|

||||

|

||||

7.0.10 (2016-09-22)

|

||||

++++++++++++++++++

|

||||

|

||||

- Handle UnicodeDecodeError when looking for python. Fixes #68.

|

||||

- Upgrade wakatime-cli to v6.0.9.

|

||||

|

||||

|

||||

7.0.9 (2016-09-02)

|

||||

++++++++++++++++++

|

||||

|

||||

- Upgrade wakatime-cli to v6.0.8.

|

||||

|

||||

|

||||

7.0.8 (2016-07-21)

|

||||

++++++++++++++++++

|

||||

|

||||

- Upgrade wakatime-cli to master version to fix debug logging encoding bug.

|

||||

|

||||

|

||||

7.0.7 (2016-07-06)

|

||||

++++++++++++++++++

|

||||

|

||||

- Upgrade wakatime-cli to v6.0.7.

|

||||

- Handle unknown exceptions from requests library by deleting cached session

|

||||

object because it could be from a previous conflicting version.

|

||||

- New hostname setting in config file to set machine hostname. Hostname

|

||||

argument takes priority over hostname from config file.

|

||||

- Prevent logging unrelated exception when logging tracebacks.

|

||||

- Use correct namespace for pygments.lexers.ClassNotFound exception so it is

|

||||

caught when dependency detection not available for a language.

|

||||

|

||||

|

||||

7.0.6 (2016-06-13)

|

||||

++++++++++++++++++

|

||||

|

||||

- Upgrade wakatime-cli to v6.0.5.

|

||||

- Upgrade pygments to v2.1.3 for better language coverage.

|

||||

|

||||

|

||||

7.0.5 (2016-06-08)

|

||||

++++++++++++++++++

|

||||

|

||||

- Upgrade wakatime-cli to master version to fix bug in urllib3 package causing

|

||||

unhandled retry exceptions.

|

||||

- Prevent tracking git branch with detached head.

|

||||

|

||||

|

||||

7.0.4 (2016-05-21)

|

||||

++++++++++++++++++

|

||||

|

||||

- Upgrade wakatime-cli to v6.0.3.

|

||||

- Upgrade requests dependency to v2.10.0.

|

||||

- Support for SOCKS proxies.

|

||||

|

||||

|

||||

7.0.3 (2016-05-16)

|

||||

++++++++++++++++++

|

||||

|

||||

- Upgrade wakatime-cli to v6.0.2.

|

||||

- Prevent popup on Mac when xcode-tools is not installed.

|

||||

|

||||

|

||||

7.0.2 (2016-04-29)

|

||||

++++++++++++++++++

|

||||

|

||||

- Prevent implicit unicode decoding from string format when logging output

|

||||

from Python version check.

|

||||

|

||||

|

||||

7.0.1 (2016-04-28)

|

||||

++++++++++++++++++

|

||||

|

||||

- Upgrade wakatime-cli to v6.0.1.

|

||||

- Fix bug which prevented plugin from being sent with extra heartbeats.

|

||||

|

||||

|

||||

7.0.0 (2016-04-28)

|

||||

++++++++++++++++++

|

||||

|

||||

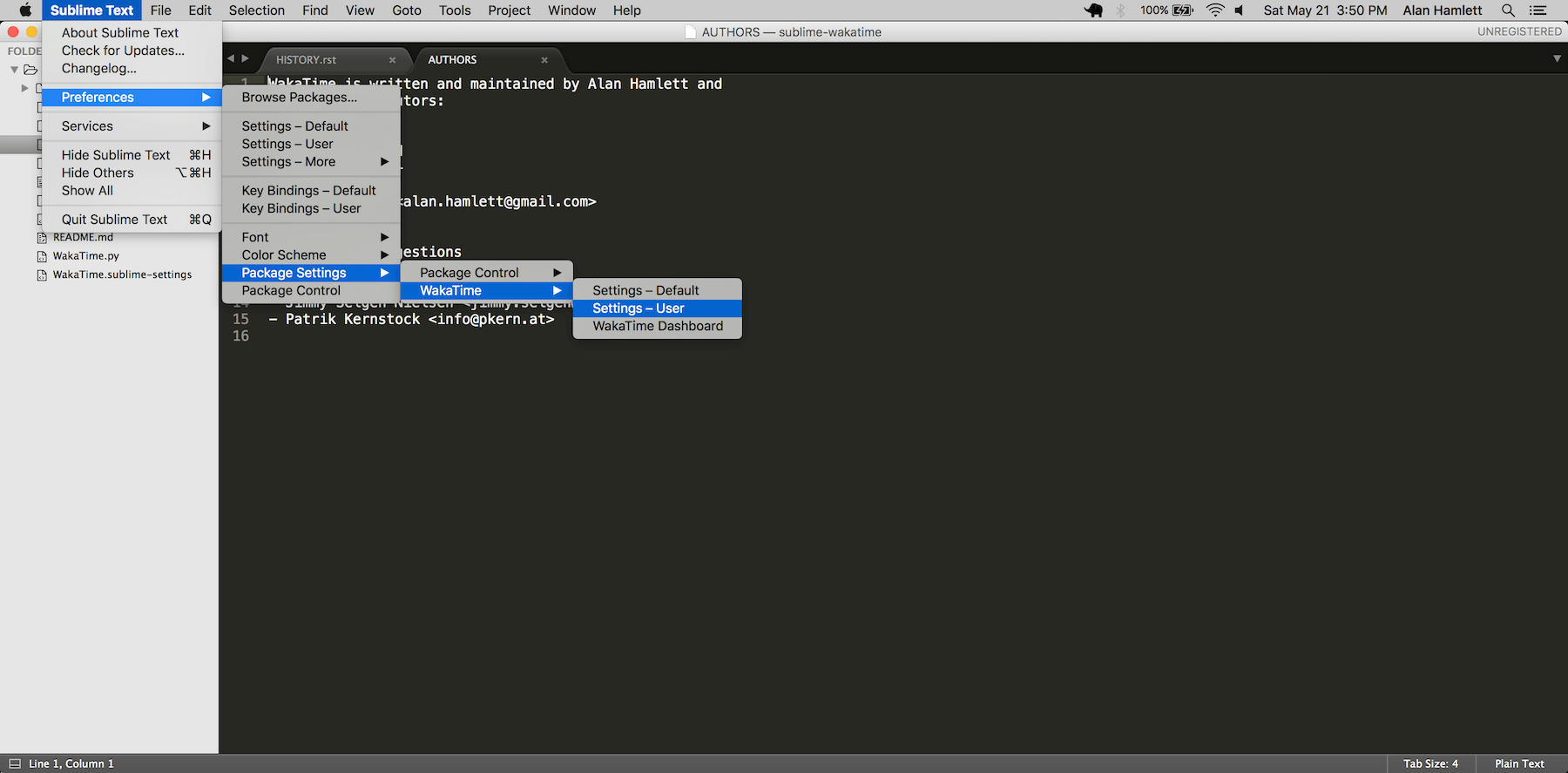

- Queue heartbeats and send to wakatime-cli after 4 seconds.

|

||||

- Nest settings menu under Package Settings.

|

||||

- Upgrade wakatime-cli to v6.0.0.

|

||||

- Increase default network timeout to 60 seconds when sending heartbeats to

|

||||

the api.

|

||||

- New --extra-heartbeats command line argument for sending a JSON array of

|

||||

extra queued heartbeats to STDIN.

|

||||

- Change --entitytype command line argument to --entity-type.

|

||||

- No longer allowing --entity-type of url.

|

||||

- Support passing an alternate language to cli to be used when a language can

|

||||

not be guessed from the code file.

|

||||

|

||||

|

||||
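The 7.0.0 entry above describes queueing heartbeats and passing the extra ones to the cli as a JSON array on STDIN. The sketch below shows only the serialization step under stated assumptions: the helper name `split_heartbeats` and the heartbeat fields (`entity`, `timestamp`, `is_write`) are hypothetical, and no process is launched.

```python
import json


def split_heartbeats(heartbeats):
    """Split a queue of heartbeat dicts into (first, extra_json).

    `first` would be sent through the normal command line arguments, and
    `extra_json` is the JSON array text that would be written to STDIN.
    Returns (None, None) for an empty queue.
    """
    if not heartbeats:
        return None, None
    first, extra = heartbeats[0], heartbeats[1:]
    # Only build a payload when there is something beyond the first heartbeat.
    extra_json = json.dumps(extra) if extra else None
    return first, extra_json
```

Keeping the first heartbeat separate means a queue holding a single item needs no STDIN payload at all.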

6.0.8 (2016-04-18)
++++++++++++++++++

- Upgrade wakatime-cli to v5.0.0.
- Support regex patterns in projectmap config section for renaming projects.
- Upgrade pytz to v2016.3.
- Upgrade tzlocal to v1.2.2.


6.0.7 (2016-03-11)
++++++++++++++++++

- Fix bug causing RuntimeError when finding Python location


6.0.6 (2016-03-06)
++++++++++++++++++

- upgrade wakatime-cli to v4.1.13
- encode TimeZone as utf-8 before adding to headers
- encode X-Machine-Name as utf-8 before adding to headers


6.0.5 (2016-03-06)
++++++++++++++++++

- upgrade wakatime-cli to v4.1.11
- encode machine hostname as Unicode when adding to X-Machine-Name header


6.0.4 (2016-01-15)
++++++++++++++++++

- fix UnicodeDecodeError on ST2 with non-English locale


6.0.3 (2016-01-11)
++++++++++++++++++

- upgrade wakatime-cli core to v4.1.10
- accept 201 or 202 response codes as success from api
- upgrade requests package to v2.9.1


6.0.2 (2016-01-06)
++++++++++++++++++

- upgrade wakatime-cli core to v4.1.9
- improve C# dependency detection
- correctly log exception tracebacks
- log all unknown exceptions to wakatime.log file
- disable urllib3 SSL warning from every request
- detect dependencies from golang files
- use api.wakatime.com for sending heartbeats


6.0.1 (2016-01-01)
++++++++++++++++++

- use embedded python if system python is broken, or doesn't output a version number
- log output from wakatime-cli in ST console when in debug mode


6.0.0 (2015-12-01)
++++++++++++++++++

- use embeddable Python instead of installing on Windows


5.0.1 (2015-10-06)
++++++++++++++++++

- look for python in system PATH again


5.0.0 (2015-10-02)
++++++++++++++++++

- improve logging with levels and log function
- switch registry warnings to debug log level


4.0.20 (2015-10-01)
++++++++++++++++++

- correctly find python binary in non-Windows environments


4.0.19 (2015-10-01)
++++++++++++++++++

- handle case where ST builtin python does not have _winreg or winreg module


4.0.18 (2015-10-01)
++++++++++++++++++

- find python location from windows registry


4.0.17 (2015-10-01)
++++++++++++++++++

- download python in non blocking background thread for Windows machines


4.0.16 (2015-09-29)
++++++++++++++++++

- upgrade wakatime cli to v4.1.8
- fix bug in guess_language function
- improve dependency detection
- default request timeout of 30 seconds
- new --timeout command line argument to change request timeout in seconds
- allow passing command line arguments using sys.argv
- fix entry point for pypi distribution
- new --entity and --entitytype command line arguments


4.0.15 (2015-08-28)
++++++++++++++++++

- upgrade wakatime cli to v4.1.3
- fix local session caching


4.0.14 (2015-08-25)
++++++++++++++++++

- upgrade wakatime cli to v4.1.2
- fix bug in offline caching which prevented heartbeats from being cleaned up


4.0.13 (2015-08-25)
++++++++++++++++++

- upgrade wakatime cli to v4.1.1
- send hostname in X-Machine-Name header
- catch exceptions from pygments.modeline.get_filetype_from_buffer
- upgrade requests package to v2.7.0
- handle non-ASCII characters in import path on Windows, won't fix for Python2
- upgrade argparse to v1.3.0
- move language translations to api server
- move extension rules to api server
- detect correct header file language based on presence of .cpp or .c files named the same as the .h file


4.0.12 (2015-07-31)
++++++++++++++++++

- correctly use urllib in Python3


4.0.11 (2015-07-31)
++++++++++++++++++

- install python if missing on Windows OS


4.0.10 (2015-07-31)
++++++++++++++++++

- downgrade requests library to v2.6.0


4.0.9 (2015-07-29)
++++++++++++++++++

- catch exceptions from pygments.modeline.get_filetype_from_buffer


4.0.8 (2015-06-23)
++++++++++++++++++

- fix offline logging
- limit language detection to known file extensions, unless file contents has a vim modeline
- upgrade wakatime cli to v4.0.16


4.0.7 (2015-06-21)
++++++++++++++++++

- allow customizing status bar message in sublime-settings file
- guess language using multiple methods, then use most accurate guess
- use entity and type for new heartbeats api resource schema
- correctly log message from py.warnings module
- upgrade wakatime cli to v4.0.15


4.0.6 (2015-05-16)
++++++++++++++++++

- fix bug with auto detecting project name
- upgrade wakatime cli to v4.0.13


4.0.5 (2015-05-15)
++++++++++++++++++

- correctly display caller and lineno in log file when debug is true
- project passed with --project argument will always be used
- new --alternate-project argument
- upgrade wakatime cli to v4.0.12


4.0.4 (2015-05-12)
++++++++++++++++++

- reuse SSL connection over multiple processes for improved performance
- upgrade wakatime cli to v4.0.11


4.0.3 (2015-05-06)
++++++++++++++++++

- send cursorpos to wakatime cli
- upgrade wakatime cli to v4.0.10


4.0.2 (2015-05-06)
++++++++++++++++++

- only send heartbeats for the currently active buffer


4.0.1 (2015-05-06)
++++++++++++++++++

- ignore git temporary files
- don't send two write heartbeats within 2 seconds of each other

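The 4.0.1 entry above says that two write heartbeats are not sent within 2 seconds of each other. One way to express that rule is a small throttle, sketched here. The class name `WriteThrottle` and its interface are assumptions and do not reflect the plugin's own code; only the 2 second interval comes from the changelog.

```python
WRITE_INTERVAL = 2  # seconds, taken from the 4.0.1 changelog entry


class WriteThrottle(object):
    """Decide whether a write heartbeat may be sent (hypothetical helper)."""

    def __init__(self, interval=WRITE_INTERVAL):
        self.interval = interval
        self.last_write = None  # timestamp of the last write that was sent

    def should_send(self, now):
        """Return True and record `now` unless a write was sent too recently."""
        if self.last_write is not None and now - self.last_write < self.interval:
            return False
        self.last_write = now
        return True
```

A suppressed write does not move `last_write`, so the interval is always measured from the last heartbeat that was actually sent.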

4.0.0 (2015-04-12)
++++++++++++++++++

- listen for selection modified instead of buffer activated for better performance


3.0.19 (2015-04-07)
+++++++++++++++++++

- fix bug in project detection when folder not found


3.0.18 (2015-04-04)
+++++++++++++++++++

- upgrade wakatime cli to v4.0.8
- added api_url config option to .wakatime.cfg file


3.0.17 (2015-04-02)
+++++++++++++++++++

- use open folder as current project when not using revision control


3.0.16 (2015-04-02)
+++++++++++++++++++

- copy list when obfuscating api key so original command is not modified

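The 3.0.16 entry above explains that the command list is copied before the api key is obfuscated, so that the command actually executed keeps the real key. A minimal sketch of that idea follows. The `--key` flag name, the function name, and the masking format are assumptions for illustration.

```python
def obfuscate_apikey(command_list, flag='--key'):
    """Return a copy of `command_list` with the value after `flag` masked.

    The input list is never modified, so it can still be executed as-is.
    """
    cmd = list(command_list)  # shallow copy; the original stays untouched
    for index, arg in enumerate(cmd[:-1]):
        if arg == flag:
            key = cmd[index + 1]
            if len(key) > 4:
                # Keep only the last four characters readable.
                cmd[index + 1] = 'X' * (len(key) - 4) + key[-4:]
            else:
                cmd[index + 1] = 'X' * len(key)
    return cmd
```

Logging `obfuscate_apikey(cmd)` while running `cmd` itself keeps the secret out of the console without breaking the request.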

3.0.15 (2015-04-01)
+++++++++++++++++++

- obfuscate api key when logging to Sublime Text Console in debug mode


3.0.14 (2015-03-31)
+++++++++++++++++++

- always use external python binary because ST builtin python does not support checking SSL certs
- upgrade wakatime cli to v4.0.6


3.0.13 (2015-03-23)
+++++++++++++++++++

- correctly check for SSL support in ST built-in python
- fix status bar message


3.0.12 (2015-03-23)
+++++++++++++++++++

- always use unicode function from compat module when encoding log messages


3.0.11 (2015-03-23)
+++++++++++++++++++

- upgrade simplejson package to v3.6.5


3.0.10 (2015-03-22)
+++++++++++++++++++

- ability to disable status bar message from WakaTime.sublime-settings file


3.0.9 (2015-03-20)
++++++++++++++++++

- status bar message showing when WakaTime plugin is enabled
- moved some logic into thread to help prevent slow plugin warning message


3.0.8 (2015-03-09)
++++++++++++++++++

- upgrade wakatime cli to v4.0.4
- use requests library instead of urllib2, so api SSL cert is verified
- new --notfile argument to support logging time without a real file
- new --proxy argument for https proxy support
- new options for excluding and including directories


3.0.7 (2015-02-05)
++++++++++++++++++

- handle errors encountered when looking for .sublime-project file


3.0.6 (2015-01-13)
++++++++++++++++++

- upgrade external wakatime package to v3.0.5
- ignore errors from malformed markup (too many closing tags)


3.0.5 (2015-01-06)
++++++++++++++++++

- upgrade external wakatime package to v3.0.4
- remove unused dependency, which is missing in some python environments


3.0.4 (2014-12-26)
++++++++++++++++++

- fix bug causing plugin to not work in Sublime Text 2


3.0.3 (2014-12-25)
++++++++++++++++++

- upgrade external wakatime package to v3.0.3
- detect JavaScript frameworks from script tags in HTML template files


3.0.2 (2014-12-25)
++++++++++++++++++

- upgrade external wakatime package to v3.0.2
- detect frameworks from JavaScript and JSON files


3.0.1 (2014-12-23)
++++++++++++++++++

- parse use namespaces from php files


3.0.0 (2014-12-23)
++++++++++++++++++

- upgrade external wakatime package to v3.0.1
- detect libraries and frameworks for C++, Java, .NET, PHP, and Python files


2.0.21 (2014-12-22)
++++++++++++++++++

- upgrade external wakatime package to v2.1.11
- fix bug in offline logging when no response from api


2.0.20 (2014-12-05)
++++++++++++++++++

- upgrade external wakatime package to v2.1.9
- fix bug preventing offline heartbeats from being purged after uploaded


2.0.19 (2014-12-04)
++++++++++++++++++

- upgrade external wakatime package to v2.1.8
- fix UnicodeDecodeError when building user agent string
- handle case where response is None


2.0.18 (2014-11-30)
++++++++++++++++++

- upgrade external wakatime package to v2.1.7
- upgrade pygments to v2.0.1
- always log an error when api key is incorrect


2.0.17 (2014-11-18)
++++++++++++++++++

- upgrade external wakatime package to v2.1.6
- fix list index error when detecting subversion project


2.0.16 (2014-11-12)
++++++++++++++++++

- upgrade external wakatime package to v2.1.4
- when Python was not compiled with https support, log an error to the log file


2.0.15 (2014-11-10)
++++++++++++++++++

- upgrade external wakatime package to v2.1.3
- correctly detect branch for subversion projects


2.0.14 (2014-10-14)
++++++++++++++++++

- popup error message if Python binary not found


2.0.13 (2014-10-07)
++++++++++++++++++

- upgrade external wakatime package to v2.1.2
- still log heartbeat when something goes wrong while reading num lines in file


2.0.12 (2014-09-30)
++++++++++++++++++

- upgrade external wakatime package to v2.1.1
- fix bug where binary file opened as utf-8


2.0.11 (2014-09-30)
++++++++++++++++++

- upgrade external wakatime package to v2.1.0
- python3 compatibility changes


2.0.10 (2014-08-29)
++++++++++++++++++

- upgrade external wakatime package to v2.0.8
- suppress output from svn command


2.0.9 (2014-08-27)
++++++++++++++++++

- upgrade external wakatime package to v2.0.7
- fix support for subversion projects on Mac OS X


2.0.8 (2014-08-07)
++++++++++++++++++

- upgrade external wakatime package to v2.0.6
- fix unicode bug by encoding json POST data


2.0.7 (2014-07-25)
++++++++++++++++++

- upgrade external wakatime package to v2.0.5
- option in .wakatime.cfg to obfuscate file names


2.0.6 (2014-07-25)
++++++++++++++++++

- upgrade external wakatime package to v2.0.4
- use unique logger namespace to prevent collisions in shared plugin environments


2.0.5 (2014-06-18)
++++++++++++++++++

- upgrade external wakatime package to v2.0.3
- use project name from sublime-project file when no revision control project found


2.0.4 (2014-06-09)
++++++++++++++++++

- upgrade external wakatime package to v2.0.2
- disable offline logging when Python not compiled with sqlite3 module


2.0.3 (2014-05-26)
++++++++++++++++++

- upgrade external wakatime package to v2.0.1
- fix bug in queue preventing completed tasks from being purged


2.0.2 (2014-05-26)
++++++++++++++++++

- disable syncing offline time until bug fixed


2.0.1 (2014-05-25)
++++++++++++++++++


6

LICENSE


@@ -1,4 +1,6 @@

Copyright (c) 2014 Alan Hamlett https://wakatime.com
BSD 3-Clause License

Copyright (c) 2014 by the respective authors (see AUTHORS file).
All rights reserved.

Redistribution and use in source and binary forms, with or without

@@ -12,7 +14,7 @@ modification, are permitted provided that the following conditions are met:

in the documentation and/or other materials provided
with the distribution.

* Neither the names of Wakatime or WakaTime, nor the names of its
* Neither the names of WakaTime, nor the names of its
contributors may be used to endorse or promote products derived
from this software without specific prior written permission.


@@ -6,24 +6,37 @@

"children":
[
{
"caption": "WakaTime",
"mnemonic": "W",
"id": "wakatime-settings",
"caption": "Package Settings",
"mnemonic": "P",
"id": "package-settings",
"children":
[
{
"command": "open_file", "args":
{
"file": "${packages}/WakaTime/WakaTime.sublime-settings"
},
"caption": "Settings – Default"
},
{
"command": "open_file", "args":
{
"file": "${packages}/User/WakaTime.sublime-settings"
},
"caption": "Settings – User"
"caption": "WakaTime",
"mnemonic": "W",
"id": "wakatime-settings",
"children":
[
{
"command": "open_file", "args":
{
"file": "${packages}/WakaTime/WakaTime.sublime-settings"
},
"caption": "Settings – Default"
},
{
"command": "open_file", "args":
{
"file": "${packages}/User/WakaTime.sublime-settings"
},
"caption": "Settings – User"
},
{
"command": "wakatime_dashboard",
"args": {},
"caption": "WakaTime Dashboard"
}
]
}
]
}


45

README.md


@@ -1,33 +1,56 @@

|

||||

sublime-wakatime

|

||||

================

|

||||

|

||||

Fully automatic time tracking for Sublime Text 2 & 3.

|

||||

Metrics, insights, and time tracking automatically generated from your programming activity.

|

||||

|

||||

|

||||

Installation

|

||||

------------

|

||||

|

||||

Heads Up! For Sublime Text 2 on Windows & Linux, WakaTime depends on [Python](http://www.python.org/getit/) being installed to work correctly.

|

||||

1. Install [Package Control](https://packagecontrol.io/installation).

|

||||

|

||||

1. Get an api key from: https://wakatime.com/#apikey

|

||||

2. Using [Package Control](https://packagecontrol.io/docs/usage):

|

||||

|

||||

2. Using [Sublime Package Control](http://wbond.net/sublime_packages/package_control):

|

||||

|

||||

a) Press `ctrl+shift+p`(Windows, Linux) or `cmd+shift+p`(OS X).

|

||||

a) Inside Sublime, press `ctrl+shift+p`(Windows, Linux) or `cmd+shift+p`(OS X).

|

||||

|

||||

b) Type `install`, then press `enter` with `Package Control: Install Package` selected.

|

||||

|

||||

c) Type `wakatime`, then press `enter` with the `WakaTime` plugin selected.

|

||||

|

||||

3. You will see a prompt at the bottom asking for your [api key](https://wakatime.com/#apikey). Enter your api key, then press `enter`.

|

||||

3. Enter your [api key](https://wakatime.com/settings#apikey), then press `enter`.

|

||||

|

||||

4. Use Sublime and your time will automatically be tracked for you.

|

||||

4. Use Sublime and your time will be tracked for you automatically.

|

||||

|

||||

5. Visit https://wakatime.com to see your logged time.

|

||||

5. Visit https://wakatime.com/dashboard to see your logged time.

|

||||

|

||||

6. Consider installing [BIND9](https://help.ubuntu.com/community/BIND9ServerHowto#Caching_Server_configuration) to cache your repeated DNS requests: `sudo apt-get install bind9`

|

||||

|

||||

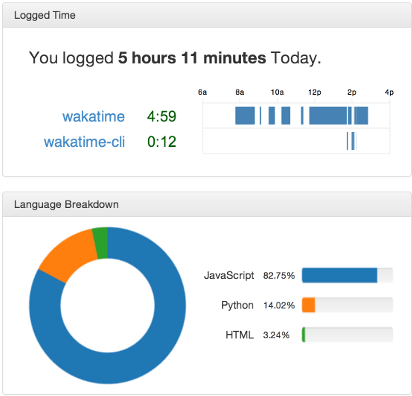

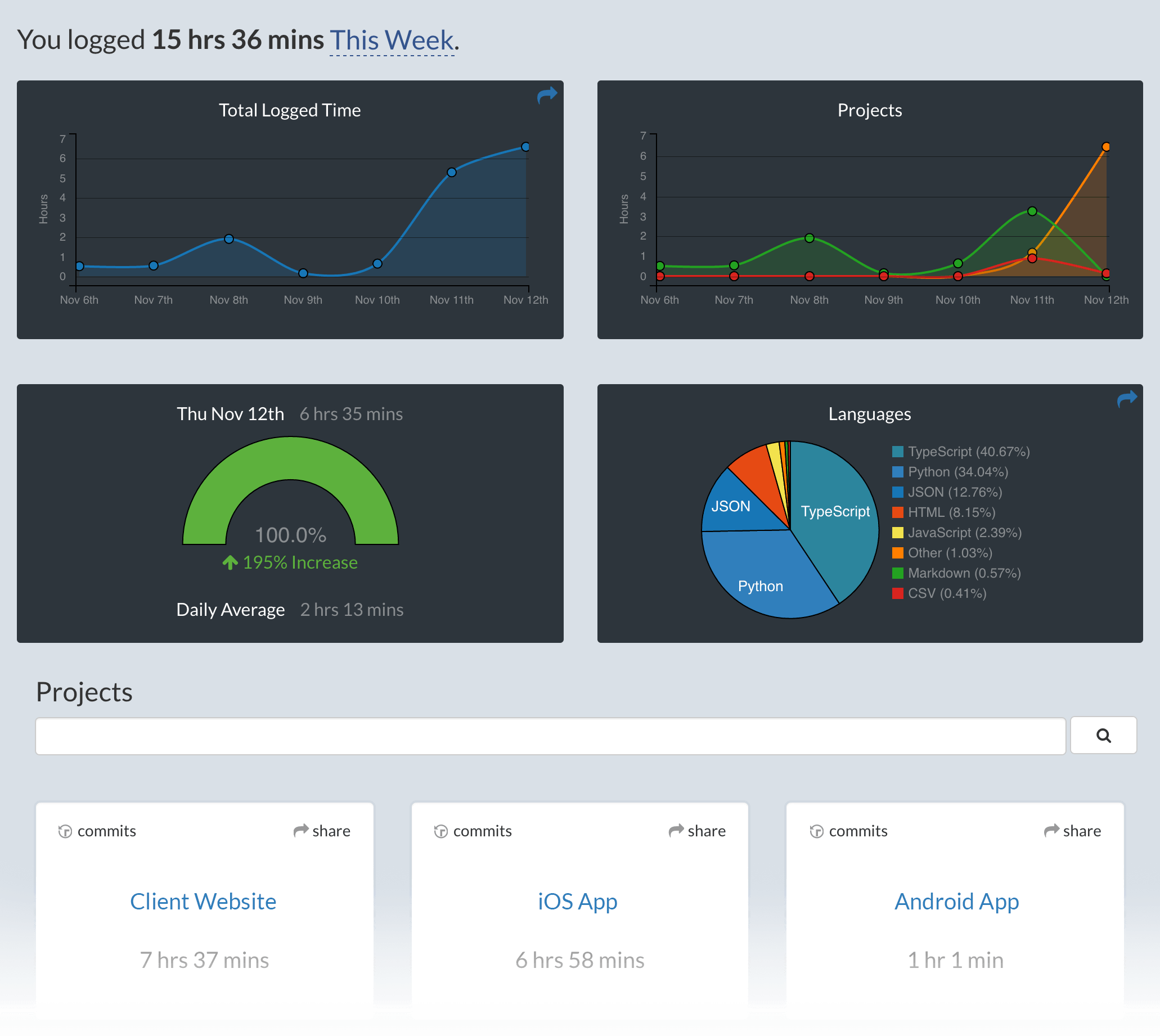

Screen Shots

|

||||

------------

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

Unresponsive Plugin Warning

|

||||

---------------------------

|

||||

|

||||

In Sublime Text 2, if you get a warning message:

|

||||

|

||||

A plugin (WakaTime) may be making Sublime Text unresponsive by taking too long (0.017332s) in its on_modified callback.

|

||||

|

||||

To fix this, go to `Preferences > Settings - User` then add the following setting:

|

||||

|

||||

`"detect_slow_plugins": false`

|

||||

|

||||

|

||||

Troubleshooting

|

||||

---------------

|

||||

|

||||

First, turn on debug mode in your `WakaTime.sublime-settings` file.

|

||||

|

||||

|

||||

|

||||

Add the line: `"debug": true`

|

||||

|

||||

Then, open your Sublime Console with `View -> Show Console` to see the plugin executing the wakatime cli process when sending a heartbeat. Also, tail your `$HOME/.wakatime.log` file to debug wakatime cli problems.

|

||||

|

||||

For more general troubleshooting information, see [wakatime/wakatime#troubleshooting](https://github.com/wakatime/wakatime#troubleshooting).

|

||||

|

||||
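The Troubleshooting text above suggests tailing `$HOME/.wakatime.log`. On systems without a `tail` command, the last lines of the log can be read with a short script such as the sketch below. The function name `tail_log` and the default of 20 lines are assumptions; only the log file location comes from the README.

```python
import io
import os
from collections import deque


def tail_log(path=None, count=20):
    """Return the last `count` lines of the WakaTime log as a list of strings.

    When `path` is None, the file `.wakatime.log` in the home directory is used.
    """
    if path is None:
        path = os.path.join(os.path.expanduser('~'), '.wakatime.log')
    # A bounded deque keeps only the newest `count` lines in memory.
    with io.open(path, 'r', encoding='utf-8', errors='replace') as handle:
        return [line.rstrip('\n') for line in deque(handle, maxlen=count)]
```

For example, `print('\n'.join(tail_log(count=50)))` prints the 50 most recent log lines, provided the log file exists.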

679

WakaTime.py


@@ -6,166 +6,641 @@ License: BSD, see LICENSE for more details.

|

||||

Website: https://wakatime.com/

|

||||

==========================================================="""

|

||||

|

||||

__version__ = '2.0.1'

|

||||

|

||||

__version__ = '7.0.16'

|

||||

|

||||

|

||||

import sublime

|

||||

import sublime_plugin

|

||||

|

||||

import glob

|

||||

import contextlib

|

||||

import json

|

||||

import os

|

||||

import platform

|

||||

import re

|

||||

import sys

|

||||

import time

|

||||

import threading

|

||||

import uuid

|

||||

from os.path import expanduser, dirname, realpath, isfile, join, exists

|

||||

import traceback

|

||||

import urllib

|

||||

import webbrowser

|

||||

from datetime import datetime

|

||||

from subprocess import Popen, STDOUT, PIPE

|

||||

from zipfile import ZipFile

|

||||

try:
    import _winreg as winreg  # py2
except ImportError:
    try:
        import winreg  # py3
    except ImportError:
        winreg = None

try:
    import Queue as queue  # py2
except ImportError:
    import queue  # py3


is_py2 = (sys.version_info[0] == 2)
is_py3 = (sys.version_info[0] == 3)

if is_py2:
    def u(text):
        if text is None:
            return None
        if isinstance(text, unicode):
            return text
        try:
            return text.decode('utf-8')
        except:
            try:
                return text.decode(sys.getdefaultencoding())
            except:
                try:
                    return unicode(text)
                except:
                    try:
                        return text.decode('utf-8', 'replace')
                    except:
                        try:
                            return unicode(str(text))
                        except:
                            return unicode('')

elif is_py3:
    def u(text):
        if text is None:
            return None
        if isinstance(text, bytes):
            try:
                return text.decode('utf-8')
            except:
                try:
                    return text.decode(sys.getdefaultencoding())
                except:
                    pass
        try:
            return str(text)
        except:
            return text.decode('utf-8', 'replace')

else:
    raise Exception('Unsupported Python version: {0}.{1}.{2}'.format(
        sys.version_info[0],
        sys.version_info[1],
        sys.version_info[2],
    ))

|

||||

|

||||

|

||||

# globals

|

||||

ACTION_FREQUENCY = 2

|

||||

HEARTBEAT_FREQUENCY = 2

|

||||

ST_VERSION = int(sublime.version())

|

||||

PLUGIN_DIR = dirname(realpath(__file__))

|

||||

API_CLIENT = '%s/packages/wakatime/wakatime-cli.py' % PLUGIN_DIR

|

||||

PLUGIN_DIR = os.path.dirname(os.path.realpath(__file__))

|

||||

API_CLIENT = os.path.join(PLUGIN_DIR, 'packages', 'wakatime', 'cli.py')

|

||||

SETTINGS_FILE = 'WakaTime.sublime-settings'

|

||||

SETTINGS = {}

|

||||

LAST_ACTION = {

|

||||

LAST_HEARTBEAT = {

|

||||

'time': 0,

|

||||

'file': None,

|

||||

'is_write': False,

|

||||

}

|

||||

HAS_SSL = False

|

||||

LOCK = threading.RLock()

|

||||

PYTHON_LOCATION = None

|

||||

HEARTBEATS = queue.Queue()

|

||||

|

||||

# check if we have SSL support

|

||||

|

||||

# Log Levels

|

||||

DEBUG = 'DEBUG'

|

||||

INFO = 'INFO'

|

||||

WARNING = 'WARNING'

|

||||

ERROR = 'ERROR'

|

||||

|

||||

|

||||

# add wakatime package to path

|

||||

sys.path.insert(0, os.path.join(PLUGIN_DIR, 'packages'))

|

||||

try:

|

||||

import ssl

|

||||

import socket

|

||||

socket.ssl

|

||||

HAS_SSL = True

|

||||

except (ImportError, AttributeError):

|

||||

from subprocess import Popen

|

||||

from wakatime.main import parseConfigFile

|

||||

except ImportError:

|

||||

pass

|

||||

|

||||

if HAS_SSL:

|

||||

# import wakatime package

|

||||

sys.path.insert(0, join(PLUGIN_DIR, 'packages', 'wakatime'))

|

||||

import wakatime

|

||||

|

||||

def set_timeout(callback, seconds):
    """Runs the callback after the given seconds delay.

    If this is Sublime Text 3, runs the callback on an alternate thread. If this
    is Sublime Text 2, runs the callback in the main thread.
    """

    milliseconds = int(seconds * 1000)
    try:
        sublime.set_timeout_async(callback, milliseconds)
    except AttributeError:
        sublime.set_timeout(callback, milliseconds)


def log(lvl, message, *args, **kwargs):
    try:
        if lvl == DEBUG and not SETTINGS.get('debug'):
            return
        msg = message
        if len(args) > 0:
            msg = message.format(*args)
        elif len(kwargs) > 0:
            msg = message.format(**kwargs)
        try:
            print('[WakaTime] [{lvl}] {msg}'.format(lvl=lvl, msg=msg))
        except UnicodeDecodeError:
            print(u('[WakaTime] [{lvl}] {msg}').format(lvl=lvl, msg=u(msg)))
    except RuntimeError:
        set_timeout(lambda: log(lvl, message, *args, **kwargs), 0)


def resources_folder():
    if platform.system() == 'Windows':
        return os.path.join(os.getenv('APPDATA'), 'WakaTime')
    else:
        return os.path.join(os.path.expanduser('~'), '.wakatime')


def update_status_bar(status):
    """Updates the status bar."""

    try:
        if SETTINGS.get('status_bar_message'):
            msg = datetime.now().strftime(SETTINGS.get('status_bar_message_fmt'))
            if '{status}' in msg:
                msg = msg.format(status=status)

            active_window = sublime.active_window()
            if active_window:
                for view in active_window.views():
                    view.set_status('wakatime', msg)
    except RuntimeError:
        set_timeout(lambda: update_status_bar(status), 0)


def create_config_file():
    """Creates the .wakatime.cfg INI file in $HOME directory, if it does
    not already exist.
    """
    configFile = os.path.join(os.path.expanduser('~'), '.wakatime.cfg')
    try:
        with open(configFile) as fh:
            pass
    except IOError:
        try:
            with open(configFile, 'w') as fh:
                fh.write("[settings]\n")
                fh.write("debug = false\n")
                fh.write("hidefilenames = false\n")
        except IOError:
            pass

|

||||

|

||||

|

||||

def prompt_api_key():

|

||||

global SETTINGS

|

||||

if not SETTINGS.get('api_key'):

|

||||

|

||||

create_config_file()

|

||||

|

||||

default_key = ''

|

||||

try:

|

||||

configs = parseConfigFile()

|

||||

if configs is not None:

|

||||

if configs.has_option('settings', 'api_key'):

|

||||

default_key = configs.get('settings', 'api_key')

|

||||

except:

|

||||

pass

|

||||

|

||||

if SETTINGS.get('api_key'):

|

||||

return True

|

||||

else:

|

||||

def got_key(text):

|

||||

if text:

|

||||

SETTINGS.set('api_key', str(text))

|

||||

sublime.save_settings(SETTINGS_FILE)

|

||||

window = sublime.active_window()

|

||||

if window:

|

||||

window.show_input_panel('[WakaTime] Enter your wakatime.com api key:', '', got_key, None, None)

|

||||

window.show_input_panel('[WakaTime] Enter your wakatime.com api key:', default_key, got_key, None, None)

|

||||

return True

|

||||

else:

|

||||

print('[WakaTime] Error: Could not prompt for api key because no window found.')

|

||||

log(ERROR, 'Could not prompt for api key because no window found.')

|

||||

return False

|

||||

|

||||

|

||||

def python_binary():

|

||||

if platform.system() == 'Windows':

|

||||

try:

|

||||

Popen(['pythonw', '--version'])

|

||||

return 'pythonw'

|

||||

except:

|

||||

for path in glob.iglob('/python*'):

|

||||

if exists(realpath(join(path, 'pythonw.exe'))):

|

||||

return realpath(join(path, 'pythonw'))

|

||||

return None

|

||||

return 'python'

|

||||

if PYTHON_LOCATION is not None:

|

||||

return PYTHON_LOCATION

|

||||

|

||||

# look for python in PATH and common install locations

|

||||

paths = [

|

||||

os.path.join(resources_folder(), 'python'),

|

||||

None,

|

||||

'/',

|

||||

'/usr/local/bin/',

|

||||

'/usr/bin/',

|

||||

]

|

||||

for path in paths:

|

||||

path = find_python_in_folder(path)

|

||||

if path is not None:

|

||||

set_python_binary_location(path)

|

||||

return path

|

||||

|

||||

# look for python in windows registry

|

||||

path = find_python_from_registry(r'SOFTWARE\Python\PythonCore')

|

||||

if path is not None:

|

||||

set_python_binary_location(path)

|

||||

return path

|

||||

path = find_python_from_registry(r'SOFTWARE\Wow6432Node\Python\PythonCore')

|

||||

if path is not None:

|

||||

set_python_binary_location(path)

|

||||

return path

|

||||

|

||||

return None

|

||||

|

||||

|

||||

def enough_time_passed(now, last_time):

|

||||

if now - last_time > ACTION_FREQUENCY * 60:

|

||||

def set_python_binary_location(path):
    global PYTHON_LOCATION
    PYTHON_LOCATION = path
    log(DEBUG, 'Found Python at: {0}'.format(path))


def find_python_from_registry(location, reg=None):
    if platform.system() != 'Windows' or winreg is None:
        return None

    if reg is None:
        path = find_python_from_registry(location, reg=winreg.HKEY_CURRENT_USER)
        if path is None:
            path = find_python_from_registry(location, reg=winreg.HKEY_LOCAL_MACHINE)
        return path

    val = None
    sub_key = 'InstallPath'
    compiled = re.compile(r'^\d+\.\d+$')

    try:
        with winreg.OpenKey(reg, location) as handle:
            versions = []
            try:
                for index in range(1024):
                    version = winreg.EnumKey(handle, index)
                    try:
                        if compiled.search(version):
                            versions.append(version)
                    except re.error:
                        pass
            except EnvironmentError:
                pass
            versions.sort(reverse=True)
            for version in versions:
                try:
                    path = winreg.QueryValue(handle, version + '\\' + sub_key)
                    if path is not None:
                        path = find_python_in_folder(path)
                        if path is not None:
                            log(DEBUG, 'Found python from {reg}\\{key}\\{version}\\{sub_key}.'.format(
                                reg=reg,
                                key=location,
                                version=version,
                                sub_key=sub_key,
                            ))
                            return path
                except WindowsError:
                    log(DEBUG, 'Could not read registry value "{reg}\\{key}\\{version}\\{sub_key}".'.format(
                        reg=reg,
                        key=location,
                        version=version,
                        sub_key=sub_key,
                    ))
    except WindowsError:
        log(DEBUG, 'Could not read registry value "{reg}\\{key}".'.format(
            reg=reg,
            key=location,
        ))
    except:
        log(ERROR, 'Could not read registry value "{reg}\\{key}":\n{exc}'.format(
            reg=reg,
            key=location,
            exc=traceback.format_exc(),
        ))

    return val


def find_python_in_folder(folder, headless=True):
    pattern = re.compile(r'\d+\.\d+')

    path = 'python'
    if folder is not None:
        path = os.path.realpath(os.path.join(folder, 'python'))
    if headless:
        path = u(path) + u('w')
    log(DEBUG, u('Looking for Python at: {0}').format(u(path)))
    try:
        process = Popen([path, '--version'], stdout=PIPE, stderr=STDOUT)
        output, err = process.communicate()
        output = u(output).strip()
        retcode = process.poll()
        log(DEBUG, u('Python Version Output: {0}').format(output))
        if not retcode and pattern.search(output):
            return path
    except:
        log(DEBUG, u(sys.exc_info()[1]))

    if headless:
        path = find_python_in_folder(folder, headless=False)
        if path is not None:
            return path

    return None

|

||||

|

||||

|

||||

def obfuscate_apikey(command_list):
    cmd = list(command_list)
    apikey_index = None
    for num in range(len(cmd)):
        if cmd[num] == '--key':
            apikey_index = num + 1
            break
    if apikey_index is not None and apikey_index < len(cmd):
        cmd[apikey_index] = 'XXXXXXXX-XXXX-XXXX-XXXX-XXXXXXXX' + cmd[apikey_index][-4:]
    return cmd
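To show what `obfuscate_apikey` does to a command list before it is logged, here is a small standalone sketch. The function body mirrors the one in the diff; the sample command and the key value are made up for illustration.

```python
def obfuscate_apikey(command_list):
    """Return a copy of the command with the value after --key masked."""
    cmd = list(command_list)  # copy, so the caller's list is not modified
    apikey_index = None
    for num in range(len(cmd)):
        if cmd[num] == '--key':
            apikey_index = num + 1
            break
    if apikey_index is not None and apikey_index < len(cmd):
        # keep only the last four characters of the real key
        cmd[apikey_index] = 'XXXXXXXX-XXXX-XXXX-XXXX-XXXXXXXX' + cmd[apikey_index][-4:]
    return cmd


# hypothetical command and key, for illustration only
sample = ['python', 'cli.py', '--key', '01234567-89ab-cdef-0123-456789abcdef', '--write']
masked = obfuscate_apikey(sample)
print(' '.join(masked))
```

Because the function works on a copy, the original command list can still be passed to `Popen` unchanged after the masked version has been logged.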

|

||||

|

||||

|

||||

def enough_time_passed(now, is_write):
    if now - LAST_HEARTBEAT['time'] > HEARTBEAT_FREQUENCY * 60:
        return True
    if is_write and now - LAST_HEARTBEAT['time'] > 2:
        return True
    return False
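The throttling rule in `enough_time_passed` can be tried out in isolation. The sketch below is an assumption-laden variant, not the plugin's code: it takes the previous heartbeat time as an explicit `last_time` argument instead of reading the `LAST_HEARTBEAT` global, and the timestamps are hypothetical.

```python
HEARTBEAT_FREQUENCY = 2  # minutes, same value as the global in the diff


def enough_time_passed(now, last_time, is_write):
    """Decide whether a new heartbeat is due for the same file.

    `last_time` is passed explicitly here (an assumption of this sketch).
    """
    if now - last_time > HEARTBEAT_FREQUENCY * 60:
        return True   # more than two minutes since the last heartbeat
    if is_write and now - last_time > 2:
        return True   # saves are allowed through after two seconds
    return False


last = 1000.0  # hypothetical timestamp of the previous heartbeat
print(enough_time_passed(last + 1, last, is_write=True))     # False
print(enough_time_passed(last + 3, last, is_write=True))     # True
print(enough_time_passed(last + 3, last, is_write=False))    # False
print(enough_time_passed(last + 121, last, is_write=False))  # True
```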

|

||||

|

||||

|

||||

def handle_action(view, is_write=False):

|

||||

global LOCK, LAST_ACTION

|

||||

with LOCK:

|

||||

target_file = view.file_name()

|

||||

if target_file:

|

||||

thread = SendActionThread(target_file, is_write=is_write)

|

||||

thread.start()

|

||||

LAST_ACTION = {

|

||||

'file': target_file,

|

||||

'time': time.time(),

|

||||

'is_write': is_write,

|

||||

}

|

||||

def find_folder_containing_file(folders, current_file):
    """Returns absolute path to folder containing the file.
    """

    parent_folder = None

    current_folder = current_file
    while True:
        for folder in folders:
            if os.path.realpath(os.path.dirname(current_folder)) == os.path.realpath(folder):
                parent_folder = folder
                break
        if parent_folder is not None:
            break
        if not current_folder or os.path.dirname(current_folder) == current_folder:
            break
        current_folder = os.path.dirname(current_folder)

    return parent_folder

|

||||

|

||||

|

||||

class SendActionThread(threading.Thread):

|

||||

def find_project_from_folders(folders, current_file):

|

||||

"""Find project name from open folders.

|

||||

"""

|

||||

|

||||

def __init__(self, target_file, is_write=False, force=False):

|

||||

folder = find_folder_containing_file(folders, current_file)

|

||||

return os.path.basename(folder) if folder else None
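The project lookup walks up from the current file until one of the open folders matches, then uses that folder's base name as the project name. The following standalone sketch copies the two functions from the diff and runs them against a temporary directory; the `myproject/src/app.py` layout is a hypothetical example.

```python
import os
import tempfile


def find_folder_containing_file(folders, current_file):
    """Return the open folder that is an ancestor of current_file, or None."""
    parent_folder = None
    current_folder = current_file
    while True:
        for folder in folders:
            if os.path.realpath(os.path.dirname(current_folder)) == os.path.realpath(folder):
                parent_folder = folder
                break
        if parent_folder is not None:
            break
        # stop at the filesystem root, where dirname() no longer changes the path
        if not current_folder or os.path.dirname(current_folder) == current_folder:
            break
        current_folder = os.path.dirname(current_folder)
    return parent_folder


def find_project_from_folders(folders, current_file):
    folder = find_folder_containing_file(folders, current_file)
    return os.path.basename(folder) if folder else None


# hypothetical layout: <tmp>/myproject/src/app.py
root = tempfile.mkdtemp()
project_dir = os.path.join(root, 'myproject')
os.makedirs(os.path.join(project_dir, 'src'))
current = os.path.join(project_dir, 'src', 'app.py')

print(find_project_from_folders([project_dir], current))  # myproject
print(find_project_from_folders([], current))             # None
```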

|

||||

|

||||

|

||||

def is_view_active(view):
    if view:
        active_window = sublime.active_window()
        if active_window:
            active_view = active_window.active_view()
            if active_view:
                return active_view.buffer_id() == view.buffer_id()
    return False


def handle_activity(view, is_write=False):
    window = view.window()
    if window is not None:
        entity = view.file_name()
        if entity:
            timestamp = time.time()
            last_file = LAST_HEARTBEAT['file']
            if entity != last_file or enough_time_passed(timestamp, is_write):
                project = window.project_data() if hasattr(window, 'project_data') else None
                folders = window.folders()
                append_heartbeat(entity, timestamp, is_write, view, project, folders)


def append_heartbeat(entity, timestamp, is_write, view, project, folders):
    global LAST_HEARTBEAT

    # add this heartbeat to queue
    heartbeat = {
        'entity': entity,
        'timestamp': timestamp,
        'is_write': is_write,
        'cursorpos': view.sel()[0].begin() if view.sel() else None,
        'project': project,
        'folders': folders,
    }
    HEARTBEATS.put_nowait(heartbeat)

    # make this heartbeat the LAST_HEARTBEAT
    LAST_HEARTBEAT = {
        'file': entity,
        'time': timestamp,
        'is_write': is_write,
    }

    # process the queue of heartbeats in the future
    seconds = 4
    set_timeout(process_queue, seconds)

|

||||

|

||||

|

||||

def process_queue():
    try:
        heartbeat = HEARTBEATS.get_nowait()
    except queue.Empty:
        return

    has_extra_heartbeats = False
    extra_heartbeats = []
    try:
        while True:
            extra_heartbeats.append(HEARTBEATS.get_nowait())
            has_extra_heartbeats = True
    except queue.Empty:
        pass

    thread = SendHeartbeatsThread(heartbeat)
    if has_extra_heartbeats:
        thread.add_extra_heartbeats(extra_heartbeats)
    thread.start()
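The batching idea in `process_queue` (take the first queued heartbeat, then drain everything else as "extra" heartbeats) can be sketched without Sublime Text or threads. The helper name `drain` and the sample entities are assumptions made for this illustration.

```python
try:
    import Queue as queue  # py2
except ImportError:
    import queue  # py3


def drain(heartbeats):
    """Return (first, extras) from the queue, or (None, []) if it is empty."""
    try:
        first = heartbeats.get_nowait()
    except queue.Empty:
        return None, []

    extras = []
    try:
        while True:
            # get_nowait() raises queue.Empty once nothing is left
            extras.append(heartbeats.get_nowait())
    except queue.Empty:
        pass
    return first, extras


pending = queue.Queue()
for name in ('a.py', 'b.py', 'c.py'):  # hypothetical entities
    pending.put_nowait({'entity': name})

first, extras = drain(pending)
print(first['entity'], [h['entity'] for h in extras])
```

Draining the queue this way means several heartbeats collected during the four-second delay are sent by a single background thread instead of one thread each.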

|

||||

|

||||

|

||||

class SendHeartbeatsThread(threading.Thread):

|

||||

"""Non-blocking thread for sending heartbeats to api.

|

||||

"""

|

||||

|

||||

def __init__(self, heartbeat):

|

||||

threading.Thread.__init__(self)

|

||||

self.target_file = target_file

|

||||

self.is_write = is_write

|

||||

self.force = force

|

||||

|

||||

self.debug = SETTINGS.get('debug')

|

||||

self.api_key = SETTINGS.get('api_key', '')

|

||||

self.ignore = SETTINGS.get('ignore', [])

|

||||

self.last_action = LAST_ACTION

|

||||

|

||||

self.heartbeat = heartbeat

|

||||

self.has_extra_heartbeats = False

|

||||

|

||||

def add_extra_heartbeats(self, extra_heartbeats):

|

||||

self.has_extra_heartbeats = True

|

||||

self.extra_heartbeats = extra_heartbeats

|

||||

|

||||

def run(self):

|

||||

if self.target_file:

|

||||

self.timestamp = time.time()

|

||||

if self.force or (self.is_write and not self.last_action['is_write']) or self.target_file != self.last_action['file'] or enough_time_passed(self.timestamp, self.last_action['time']):

|

||||

self.send()

|

||||

"""Running in background thread."""

|

||||

|

||||

def send(self):

|

||||

if not self.api_key:

|

||||

print('[WakaTime] Error: missing api key.')

|

||||

return

|

||||

ua = 'sublime/%d sublime-wakatime/%s' % (ST_VERSION, __version__)

|

||||

cmd = [

|

||||

API_CLIENT,

|

||||

'--file', self.target_file,

|

||||

'--time', str('%f' % self.timestamp),

|

||||

'--plugin', ua,

|

||||

'--key', str(bytes.decode(self.api_key.encode('utf8'))),

|

||||

]

|

||||

if self.is_write:

|

||||

cmd.append('--write')

|

||||

for pattern in self.ignore:

|

||||

cmd.extend(['--ignore', pattern])

|

||||

if self.debug:

|

||||

cmd.append('--verbose')

|

||||

if HAS_SSL:

|

||||

self.send_heartbeats()

|

||||

|

||||

    def build_heartbeat(self, entity=None, timestamp=None, is_write=None,
                        cursorpos=None, project=None, folders=None):
        """Returns a dict for passing to wakatime-cli as arguments."""

        heartbeat = {
            'entity': entity,
            'timestamp': timestamp,
            'is_write': is_write,
        }

        if project and project.get('name'):
            heartbeat['alternate_project'] = project.get('name')
        elif folders:
            project_name = find_project_from_folders(folders, entity)
            if project_name:
                heartbeat['alternate_project'] = project_name

        if cursorpos is not None:
            heartbeat['cursorpos'] = '{0}'.format(cursorpos)

        return heartbeat
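As a rough illustration of how a heartbeat dict of this shape maps onto command-line arguments, the sketch below turns one into an argument list. The helper name `heartbeat_to_args` is hypothetical; the flags are the ones that appear in `send_heartbeats` in this diff, and the sample values are invented.

```python
def heartbeat_to_args(heartbeat):
    """Translate a heartbeat dict into CLI arguments (hypothetical helper)."""
    args = [
        '--entity', heartbeat['entity'],
        '--time', '%f' % heartbeat['timestamp'],
    ]
    if heartbeat['is_write']:
        args.append('--write')
    if heartbeat.get('alternate_project'):
        args.extend(['--alternate-project', heartbeat['alternate_project']])
    if heartbeat.get('cursorpos') is not None:
        args.extend(['--cursorpos', heartbeat['cursorpos']])
    return args


# invented sample heartbeat, shaped like the dict returned by build_heartbeat
example = {
    'entity': '/tmp/myproject/app.py',
    'timestamp': 1500000000.5,
    'is_write': True,
    'alternate_project': 'myproject',
    'cursorpos': '42',
}
print(heartbeat_to_args(example))
```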

|

||||

|

||||

def send_heartbeats(self):

|

||||

if python_binary():

|

||||

heartbeat = self.build_heartbeat(**self.heartbeat)

|

||||

ua = 'sublime/%d sublime-wakatime/%s' % (ST_VERSION, __version__)

|

||||

cmd = [

|

||||

python_binary(),

|

||||

API_CLIENT,

|

||||

'--entity', heartbeat['entity'],

|

||||

'--time', str('%f' % heartbeat['timestamp']),

|

||||

'--plugin', ua,

|

||||

]

|

||||

if self.api_key:

|

||||

cmd.extend(['--key', str(bytes.decode(self.api_key.encode('utf8')))])

|

||||

if heartbeat['is_write']:

|

||||

cmd.append('--write')

|

||||

if heartbeat.get('alternate_project'):

|

||||

cmd.extend(['--alternate-project', heartbeat['alternate_project']])

|

||||

if heartbeat.get('cursorpos') is not None:

|

||||

cmd.extend(['--cursorpos', heartbeat['cursorpos']])

|

||||

for pattern in self.ignore:

|

||||

cmd.extend(['--ignore', pattern])

|

||||

if self.debug:

|

||||

print(cmd)

|

||||

code = wakatime.main(cmd)

|

||||

if code != 0:

|

||||

print('[WakaTime] Error: Response code %d from wakatime package.' % code)

|

||||

else:

|

||||

python = python_binary()

|

||||

if python:

|

||||

cmd.insert(0, python)

|

||||

if self.debug:

|

||||

print(cmd)

|

||||

if platform.system() == 'Windows':

|

||||

Popen(cmd, shell=False)

|

||||

else:

|

||||

with open(join(expanduser('~'), '.wakatime.log'), 'a') as stderr:

|

||||

Popen(cmd, stderr=stderr)

|

||||

cmd.append('--verbose')

|

||||

if self.has_extra_heartbeats:

|

||||

cmd.append('--extra-heartbeats')

|

||||

stdin = PIPE

|

||||

extra_heartbeats = [self.build_heartbeat(**x) for x in self.extra_heartbeats]

|

||||

extra_heartbeats = json.dumps(extra_heartbeats)

|

||||

else:

|

||||

print('[WakaTime] Error: Unable to find python binary.')

|

||||

extra_heartbeats = None

|

||||

stdin = None

|

||||

|

||||

log(DEBUG, ' '.join(obfuscate_apikey(cmd)))

|

||||

try:

|

||||

process = Popen(cmd, stdin=stdin, stdout=PIPE, stderr=STDOUT)

|

||||

inp = None

|

||||

if self.has_extra_heartbeats:

|

||||

inp = "{0}\n".format(extra_heartbeats)

|

||||

inp = inp.encode('utf-8')

|

||||

output, err = process.communicate(input=inp)

|

||||

output = u(output)

|

||||

retcode = process.poll()

|

||||

if (not retcode or retcode == 102) and not output:

|

||||

self.sent()

|

||||

else:

|

||||

update_status_bar('Error')

|

||||

if retcode:

|

||||

log(DEBUG if retcode == 102 else ERROR, 'wakatime-core exited with status: {0}'.format(retcode))

|

||||

if output:

|

||||

log(ERROR, u('wakatime-core output: {0}').format(output))

|

||||

except:

|

||||

log(ERROR, u(sys.exc_info()[1]))

|

||||

update_status_bar('Error')

|

||||

|

||||

else:

|

||||

log(ERROR, 'Unable to find python binary.')

|

||||

update_status_bar('Error')

|

||||

|

||||

def sent(self):

|

||||

update_status_bar('OK')

|

||||

|

||||

|

||||

def download_python():
    thread = DownloadPython()
    thread.start()


class DownloadPython(threading.Thread):
    """Non-blocking thread for extracting embeddable Python on Windows machines.
    """

    def run(self):
        log(INFO, 'Downloading embeddable Python...')

        ver = '3.5.2'
        arch = 'amd64' if platform.architecture()[0] == '64bit' else 'win32'
        url = 'https://www.python.org/ftp/python/{ver}/python-{ver}-embed-{arch}.zip'.format(
            ver=ver,
            arch=arch,
        )

        if not os.path.exists(resources_folder()):
            os.makedirs(resources_folder())

        zip_file = os.path.join(resources_folder(), 'python.zip')
        try:
            urllib.urlretrieve(url, zip_file)
        except AttributeError:
            urllib.request.urlretrieve(url, zip_file)

        log(INFO, 'Extracting Python...')
        with contextlib.closing(ZipFile(zip_file)) as zf:
            path = os.path.join(resources_folder(), 'python')
            zf.extractall(path)

|

||||

|